Black Swans and Fat Tails: Why Complexity Guarantees Surprises

Complex systems don't just fail—they guarantee catastrophe. Discover why engineering's biggest disasters share a mathematical pattern we refuse to accept.

On January 28, 1986, seven astronauts died because a rubber seal became brittle at 31°F. Engineers at Morton Thiokol had warned management the night before: launching below 53°F violated their safety parameters. The memo existed. The data existed. The warning existed. Yet NASA's launch decision chain contained 534 critical interfaces, and the warning dissolved somewhere between the Thiokol teleconference and NASA's final go-order.

Here is the mathematical truth that haunts every megaproject manager: as system complexity increases linearly, potential failure modes increase exponentially. The Boeing 737 MAX contained approximately 14 million lines of code. Fukushima Daiichi had 4,437 documented safety vulnerabilities. When Nassim Taleb described "fat tail" distributions in complex systems, he was documenting a law of nature — one that engineering culture systematically ignores.

In 1984, sociologist Charles Perrow published a theory that should have transformed engineering practice. He called it Normal Accidents Theory, and its central claim was devastating: in sufficiently complex systems with tightly coupled components, accidents are not just possible — they are inevitable.

Perrow identified two dimensions that determine system vulnerability:

1. Interactive Complexity: Systems where components interact in unexpected, non-linear ways. Think of a chemical plant where a failed valve triggers a temperature spike, which triggers an automated response, which inadvertently redirects flow to a storage tank that was already near capacity.

2. Tight Coupling: Systems where processes happen too quickly for human intervention, with little slack or buffer. Once a failure cascade begins, there's no time to diagnose, deliberate, or disconnect.

When both conditions are present, Perrow argued, the system enters a state where no amount of safety protocols can prevent catastrophe. The question shifts from "whether" to "when."

[!INSIGHT] The probability of an accident in a complex, tightly coupled system approaches 1 over time. This is not pessimism — it's mathematical necessity. A system with 10,000 components, each with 99.99% reliability, still has a 63% chance of at least one component failure in any given operational cycle.

Consider the math: if each of the system's $n$ components fails independently with probability $p$, the probability of at least one failure is:

$$P(\text{failure}) = 1 - (1-p)^n$$

As $n$ grows large, even very small values of $p$ produce near-certain failure. And the formula counts only independent component failures: complexity adds further risk through interaction effects that no risk matrix can capture.
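A minimal Python sketch of the formula, assuming independent components that share one failure probability $p$ (the same simplification the formula makes). The function name `p_any_failure` is illustrative.

```python
import math

def p_any_failure(p: float, n: int) -> float:
    """Probability that at least one of n independent components fails,
    when each fails with probability p: 1 - (1 - p)**n."""
    # log1p/expm1 keep precision when p is tiny and n is huge
    return -math.expm1(n * math.log1p(-p))

# The 10,000-component, 99.99%-reliable system from the callout above
print(f"{p_any_failure(1e-4, 10_000):.3f}")  # 0.632

# Hold p fixed and grow n: the probability climbs toward 1
for n in (100, 1_000, 10_000, 100_000):
    print(n, round(p_any_failure(1e-4, n), 4))
```

Computing `(1 - p) ** n` directly also works at these sizes. The `log1p`/`expm1` form is simply the numerically safer habit when $p$ gets very small.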

The Three Mile Island Pattern

In 1979, Three Mile Island experienced a partial meltdown that Perrow would later call a "textbook normal accident." The sequence:

- A minor relief valve stuck open (mechanical failure)

- Operators misread a status indicator that showed "valve closed" (the indicator actually showed power to the valve, not valve position)

- Automated systems correctly injected emergency coolant

- Operators, believing water levels were too high, manually overrode the automatic cooling

- Within two hours, the reactor core was exposed

No single failure caused the accident. The relief valve failure was recoverable. The indicator design flaw was manageable. The operator training gap was addressable. But the interaction of all three, in a tightly coupled system with no time for reflection, created catastrophe.

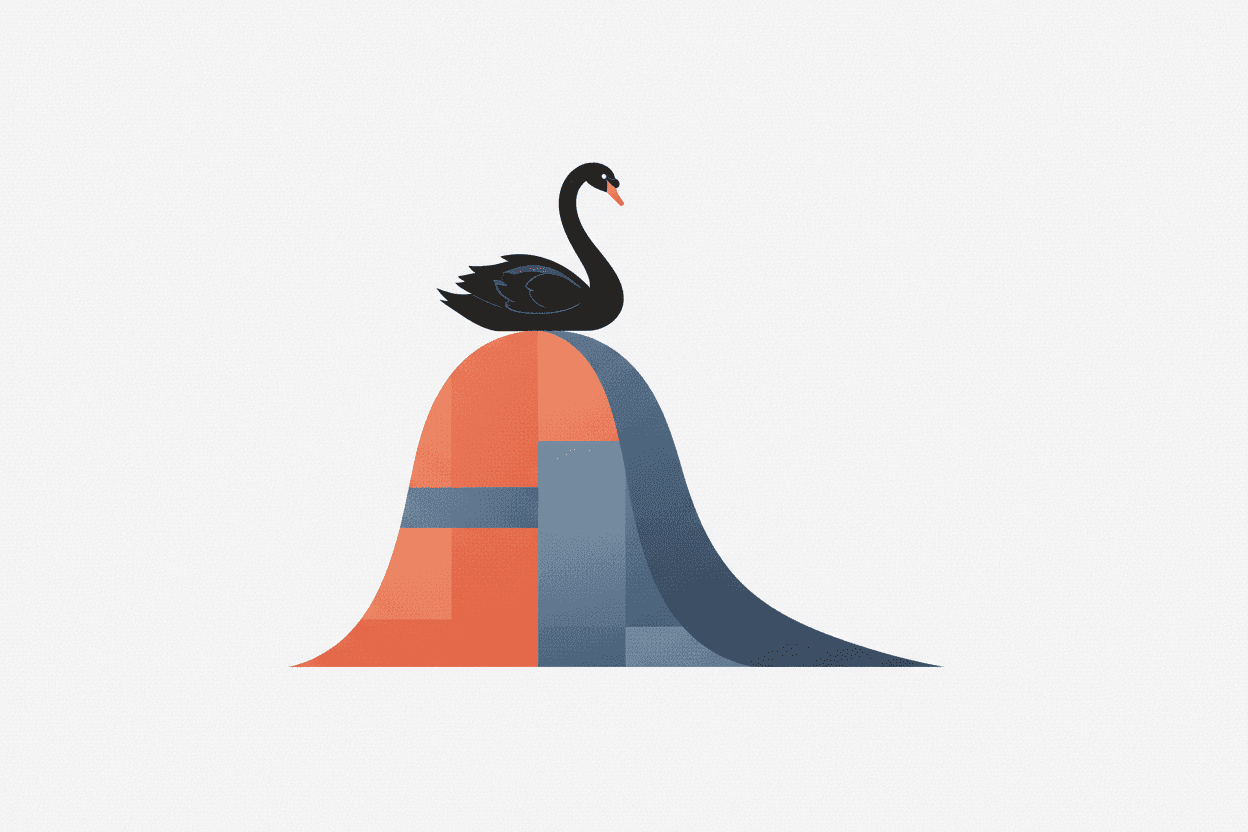

Fat Tails: The Statistical Reality We Ignore

Standard risk models in engineering assume Gaussian distributions: the familiar bell curve where extreme events are vanishingly rare. If component failure rates follow a normal distribution with mean $\mu$ and standard deviation $\sigma$, the probability of a one-sided 5-sigma event (roughly 1 in 3.5 million) is essentially zero.

But complex systems don't follow Gaussian statistics. They follow power-law distributions with "fat tails" — where extreme events occur far more frequently than intuition suggests.

"The supreme trick of the Black Swan hunter is to turn the unexpected into the expected."

In a power-law distribution, the probability density of an event of size $x$ is proportional to $x^{-\alpha}$, where $\alpha$ is the tail exponent. For financial markets, $\alpha \approx 3$. For earthquakes, $\alpha \approx 2$. For megaproject failures, studies suggest $\alpha$ ranges from 1.5 to 2.5.

What does this mean practically? In a Gaussian world, a disaster 10x larger than your worst observed event is astronomically unlikely. In a fat-tailed world, it's merely uncommon — and must be planned for.
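To make the contrast concrete, here is a small Python sketch. It compares how likely an event 10x larger than the worst observed one is, relative to the worst observed one, under each model. The assumptions are illustrative: size is measured in units of $\sigma$ above the mean, the worst observed Gaussian event sits at $3\sigma$, and the power law follows the $x^{-\alpha}$ density above, whose survival function scales as $x^{-(\alpha-1)}$.

```python
import math

def gaussian_tail(z: float) -> float:
    """One-sided P(Z > z) for a standard normal variable."""
    return 0.5 * math.erfc(z / math.sqrt(2))

def power_law_tail_ratio(alpha: float, factor: float) -> float:
    """P(X > factor * x) / P(X > x) for a density proportional to x**(-alpha).
    The survival function scales as x**(-(alpha - 1)), so the ratio
    does not depend on x: the tail is scale-free."""
    return factor ** (-(alpha - 1))

# The "1 in 3.5 million" one-sided 5-sigma event
print(f"1 in {1 / gaussian_tail(5):,.0f}")

# Assumption: the worst observed event sat 3 sigma above the mean
worst_z = 3.0
gauss_ratio = gaussian_tail(10 * worst_z) / gaussian_tail(worst_z)
print(f"Gaussian: a 10x event is {gauss_ratio:.1e} times as likely as the worst seen")

for alpha in (1.5, 2.0, 2.5):
    ratio = power_law_tail_ratio(alpha, 10)
    print(f"alpha={alpha}: a 10x event is {ratio:.3f} times as likely")
```

Under the Gaussian assumption the ratio comes out around $10^{-195}$. Under the power law with $\alpha = 2$ it is exactly $0.1$: a tenfold disaster is only ten times rarer than the worst one already seen.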

Empirical Evidence: The Megaproject Data

Bent Flyvbjerg's research at Oxford has documented the fat-tailed reality of megaprojects:

| Project Type | Average Cost Overrun | Frequency of "Black Swan" Overruns (>50%) |
|---|---|---|
| Rail | 44.7% | 14.3% |
| Bridges/Tunnels | 33.8% | 9.5% |
| Dams | 71.3% | 21.4% |
| Nuclear Plants | 207% (median) | 42.9% |

[!INSIGHT] Nuclear power plants exhibit the fattest tails of any infrastructure class. The median cost overrun exceeds 200%, and nearly half experience true "black swan" levels of budget destruction. This is not coincidence.

Case Study: Three Disasters, One Pattern

Fukushima Daiichi (2011)

The Fukushima accident report identified 4,437 safety vulnerabilities that were known before the tsunami. The plant's design assumed a maximum tsunami height of 5.7 meters. Historical records showed that a past tsunami had exceeded 38 meters. The vulnerability was documented. The historical data existed.

But here's the critical insight: even if TEPCO had addressed the 100 most critical vulnerabilities, the remaining 4,337 would have included the one that mattered — the placement of backup generators in the basement, where floodwaters destroyed them.

Black Swan multiplier: The interaction between an external event (tsunami), a design assumption (generator placement), and a regulatory failure (no mandate to re-evaluate historical maximums).

Challenger (1986)

The Rogers Commission identified the direct cause (O-ring erosion), but the deeper pattern reveals itself in the decision chain:

- 13 safety reviews between 1977 and 1986 documented O-ring erosion

- 4 separate engineering teams raised concerns about cold-weather launches

- 3 different NASA centers had access to the data

- 1 management structure had authority to halt launch

The warning didn't fail because it was wrong. It failed because it traveled through a system with high interactive complexity and tight coupling to launch schedules.

[!NOTE] A 1988 follow-up study found that NASA's organizational structure contained "fatal flaws" that made it 100x more likely to ignore safety warnings under schedule pressure. The failure was not random.

Boeing 737 MAX (2018-2019)

The MCAS system contained a single point of failure: one angle-of-attack sensor feeding data to software that could push the nose down automatically. But the deeper pattern matches Perrow's theory:

- Interactive complexity: MCAS interacted with autopilot, manual trim, and pilot training assumptions in ways no single team fully understood

- Tight coupling: Once MCAS activated, pilots had approximately 40 seconds to diagnose and disable the system before aerodynamic forces made manual trim impossible

- Documentation failure: Pilots weren't informed MCAS existed, because Boeing classified it as a minor software update

The system contained 14 million lines of code, managed by 1,000+ engineers across multiple contractors, certified by FAA personnel who had never seen the full system architecture.

The Mathematics of Surprise

Why do we keep building systems that guarantee surprise? The answer lies in a fundamental mismatch between human cognition and fat-tailed reality.

Engineers are trained to think in terms of expected value:

$$E[V] = \sum_{i} p_i \cdot v_i$$

But for fat-tailed distributions, expected value calculations fail catastrophically. The mean of a power-law distribution with $\alpha \leq 2$ is technically infinite, so the sample average of losses never settles: it keeps drifting upward as rare, enormous losses enter the data.
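The non-convergence is easy to see in simulation. This Python sketch draws Pareto samples by inverse-transform sampling, with density proportional to $x^{-\alpha}$ for $x \geq 1$ (an assumed minimum loss of 1 unit), and reports the running sample mean. The two exponents are illustrative: $\alpha = 1.8$ (infinite mean) against $\alpha = 3.5$ (finite mean $(\alpha-1)/(\alpha-2) \approx 1.67$).

```python
import random

def pareto_sample(alpha: float, rng: random.Random) -> float:
    """Draw from a density proportional to x**(-alpha), x >= 1 (alpha > 1).
    Inverse transform: the survival function is x**(-(alpha - 1)),
    so x = u**(-1 / (alpha - 1)) for u uniform on (0, 1]."""
    u = 1.0 - rng.random()  # rng.random() is in [0, 1), so u is in (0, 1]
    return u ** (-1.0 / (alpha - 1.0))

def running_means(alpha: float, checkpoints, seed: int = 42):
    """Sample mean of the first n draws, reported at each checkpoint n."""
    rng = random.Random(seed)
    total, means, n = 0.0, {}, 0
    for target in sorted(checkpoints):
        while n < target:
            total += pareto_sample(alpha, rng)
            n += 1
        means[target] = total / n
    return means

checkpoints = (100, 1_000, 10_000, 100_000)
for alpha in (1.8, 3.5):
    means = running_means(alpha, checkpoints)
    print(alpha, {n: round(m, 2) for n, m in means.items()})
```

With $\alpha = 3.5$ the running mean settles near 1.67. With $\alpha = 1.8$ it tends to keep ratcheting upward as rare giant draws arrive. The exact figures depend on the seed.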

This means that traditional risk matrices (probability × impact) systematically underestimate tail risk. A "1% chance of a $100 million loss" looks very different from a "0.001% chance of a $100 billion loss", even though both have the same expected value of $1 million. One is manageable. The other destroys companies (and, in Fukushima's case, countries).
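A quick check of the expected-value arithmetic, written in Python with exact fractions so floating-point rounding cannot blur the comparison. The helper `expected_value` is illustrative and implements $E[V] = \sum_i p_i \cdot v_i$ for lists of (probability, loss) pairs. It solves for the probability at which a $100 billion loss matches the expected value of a 1% chance of losing $100 million.

```python
from fractions import Fraction

def expected_value(outcomes):
    """E[V] = sum of p_i * v_i over (probability, loss) pairs."""
    return sum(p * v for p, v in outcomes)

small_loss = 100_000_000        # $100 million
big_loss = 100_000_000_000      # $100 billion

# Scenario A: a 1% chance of the $100 million loss
ev_a = expected_value([(Fraction(1, 100), small_loss)])

# Solve p * big_loss = E[A] for the probability of the $100 billion loss
p_b = ev_a / big_loss
ev_b = expected_value([(p_b, big_loss)])

print(f"E[A] = E[B] = ${int(ev_a):,}")                         # E[A] = E[B] = $1,000,000
print(f"p_B = {float(p_b):.3%}")                                # p_B = 0.001%
print(f"worst case differs by {big_loss // small_loss:,}x")     # worst case differs by 1,000x
```

Any probability × impact score treats the two scenarios identically. Only the worst-case figure reveals that they are different kinds of risk.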

"In a complex system, the worst-case scenario is not 'very unlikely.' It is simply the scenario you haven't imagined yet."

The Prevention Paradox

Here's the trap: successful risk prevention makes future risk prevention harder. When NASA fixed O-ring erosion problems post-Challenger, the organization concluded the problem was solved. When Boeing began reworking the MCAS software after Lion Air Flight 610, it kept the fleet flying on the assumption that the system was safe.

But in complex systems, fixing one failure mode often creates new ones:

- Added safety features increase system complexity

- Increased complexity creates new interaction effects

- New interactions generate novel failure modes

This is why the aviation industry's safety record appears to improve continuously while black swan events remain constant. We're getting better at preventing the failures we've seen. We're not getting better at preventing the failures we haven't.

Implications for Engineering Practice

If normal accidents are mathematically inevitable in complex, tightly coupled systems, what should engineers do? Three principles emerge:

1. Design for Failure, Not Against It

Assume catastrophic failure will occur. Design systems that fail gracefully, with:

- Decoupling: Build in time delays and buffers that allow human intervention

- Redundancy: Independent backup systems, not shared-point-of-failure redundancy

- Simplification: Fewer components mean exponentially fewer interactions
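A rough Python sketch of how interaction counts scale with component count. It assumes, for simplicity, that any pair, or any subset of two or more components, could interact. Pairwise interactions grow quadratically. The number of possible multi-component combinations grows exponentially, which is why removing components pays off disproportionately.

```python
def pairwise_interactions(n: int) -> int:
    """Distinct pairs among n components: n choose 2."""
    return n * (n - 1) // 2

def multi_component_combinations(n: int) -> int:
    """Subsets of two or more components: 2**n minus the empty set
    and the n single-component subsets."""
    return 2 ** n - n - 1

for n in (5, 10, 20, 40):
    print(n, pairwise_interactions(n), multi_component_combinations(n))
```

Halving a 40-component design to 20 cuts pairwise interactions from 780 to 190. It cuts possible multi-component combinations by a factor of about a million.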

2. Embrace Epistemic Humility

Fat-tailed distributions mean your worst historical event is probably not your worst possible event. Risk models should include:

- Unknown unknowns: Budget 30-50% contingency for "undefined risks" in megaprojects

- Model uncertainty: Treat probability estimates as distributions, not point values

- Scenario planning: Run exercises asking "what if everything fails simultaneously?"
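As a sketch of the "model uncertainty" point, the Python below takes the 10,000-component example from earlier and replaces its point estimate $p = 10^{-4}$ with a distribution. The choice of Beta(1, 9999), which has mean $10^{-4}$, is purely an illustrative assumption. The point is that the same average reliability can hide a wide spread of system-level outcomes.

```python
import random
import statistics

N_COMPONENTS = 10_000
rng = random.Random(7)

# Point estimate: every component fails with p = 1e-4
point = 1 - (1 - 1e-4) ** N_COMPONENTS

# Assumption: uncertainty about p is modelled as Beta(1, 9999), mean 1e-4
draws = [1 - (1 - rng.betavariate(1, 9_999)) ** N_COMPONENTS
         for _ in range(20_000)]
cuts = statistics.quantiles(draws, n=20)  # 5%, 10%, ..., 95% cut points

print(f"point estimate: {point:.2f}")
print(f"5th-95th percentile: {cuts[0]:.2f} to {cuts[-1]:.2f}")
print(f"median: {statistics.median(draws):.2f}")
```

Under this particular assumption the 5th-95th percentile band is very wide. Reporting the single "63%" figure would overstate what the model actually knows.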

3. Flatten Hierarchies of Warning

The Challenger pattern repeats because organizations structure warning signals to pass through bottlenecks. Design systems where:

- Any engineer can halt operations with a single objection

- Warning memos cannot be "managed away" before reaching decision-makers

- Near-miss events trigger automatic investigation, not discretionary review
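One simple way to see why bottlenecks matter is to treat each hand-off as a filter. Assume, purely for illustration, that a warning survives each hand-off independently with probability $q$. The chance that it reaches a decision-maker after $k$ hand-offs is then $q^k$. The Python sketch below tabulates this; the values of $q$ and $k$ are hypothetical.

```python
def warning_survival(q: float, handoffs: int) -> float:
    """Probability a warning survives `handoffs` independent relays,
    each passing it on intact with probability q."""
    return q ** handoffs

for q in (0.99, 0.95, 0.9):
    row = [round(warning_survival(q, k), 3) for k in (1, 5, 10, 20)]
    print(q, row)
```

Even with 95% fidelity per relay, roughly two in five warnings would be lost after ten hand-offs. Letting any engineer halt operations removes the chain altogether.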

[!NOTE] The aviation industry's "just culture" model

Sources: Perrow, C. (1984). Normal Accidents: Living with High-Risk Technologies. Princeton University Press. | Taleb, N.N. (2007). The Black Swan: The Impact of the Highly Improbable. Random House. | Flyvbjerg, B. (2014). What You Should Know About Megaprojects and Why: An Overview. Project Management Journal. | Cook, R.I. (1998). How Complex Systems Fail. Cognitive Technologies Laboratory. | Rogers Commission Report (1986). Report of the Presidential Commission on the Space Shuttle Challenger Accident. | National Diet of Japan (2012). The Official Report of the Fukushima Nuclear Accident Independent Investigation Commission.

This is a Premium Article

Hylē Media members get unlimited access to all premium content.