The Carbon Cost of Your Recommendation Algorithm

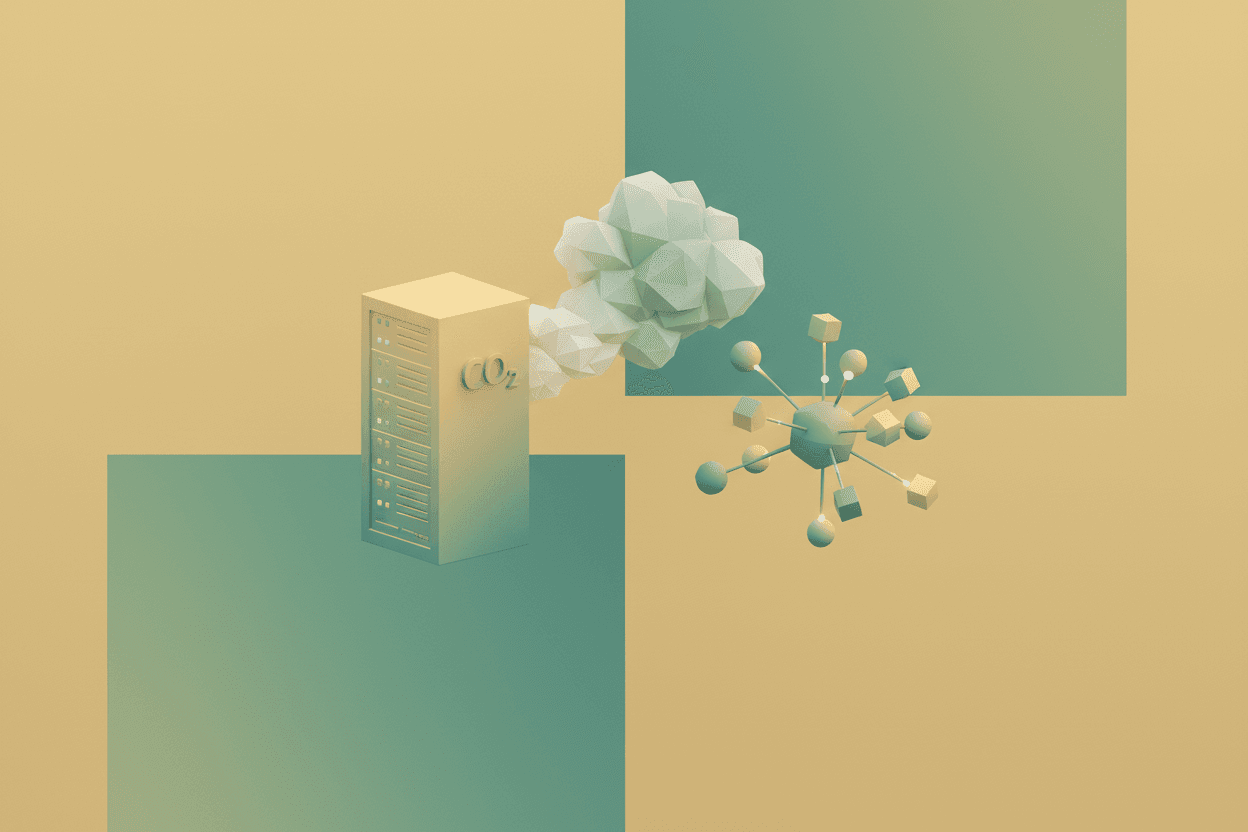

Training one large AI model emits 300 tons of CO₂. As data centers approach 8% of global power by 2026, your Netflix binge carries a hidden climate price.

The Invisible Exhaust Pipe of the Digital Age

Training a single large AI model can emit roughly 300 tons of CO₂, equivalent to about 125 round-trip flights from New York to Beijing. A ChatGPT query is estimated to use roughly 10x the energy of a Google search. Behind every personalized recommendation, every conversational AI response, and every predictive text suggestion lies an invisible infrastructure consuming staggering amounts of electricity and water.

The numbers are escalating rapidly. In 2022, data centers consumed approximately 1-1.5% of global electricity. By 2026, the International Energy Agency projects that figure could reach 4-8%, a jump driven primarily by AI workloads. The roughly 300-ton training figure above, which traces to Strubell et al. (2019), equals the lifetime emissions of about five automobiles, and that estimate predates the deployment of models with hundreds of billions or trillions of parameters.

But here's what makes this crisis particularly insidious: the environmental cost is abstracted away, hidden behind sleek interfaces and instant responses. When you ask an AI to summarize a document, there's no smokestack, no visible exhaust. So how do we quantify — and more importantly, mitigate — the environmental debt accumulating with every query?

Anatomy of an AI Carbon Footprint

Training: The Front-Loaded Emission Bomb

The carbon cost of AI breaks down into two phases: training and inference. Training dominates the initial footprint. GPT-3's training run consumed an estimated 1,287 MWh of electricity. At the average US grid carbon intensity of 0.423 kg CO₂/kWh, that comes to roughly 544 tons of CO₂; Patterson et al. (2021) report about 552 tons using a slightly higher intensity figure. Full lifecycle accounting, which adds hardware manufacturing and data center construction, would push the total higher still.

The formula for training emissions is deceptively simple:

$$E_{training} = P_{cluster} \times T_{training} \times C_{grid}$$

Where $P_{cluster}$ is power consumption (in kW), $T_{training}$ is training time (in hours), and $C_{grid}$ is the carbon intensity of the local electricity grid (kg CO₂/kWh). Running the same workload in Iceland (hydroelectric grid, ~0.01 kg CO₂/kWh) versus West Virginia (coal-heavy, ~0.80 kg CO₂/kWh) changes emissions by a factor of 80.

[!INSIGHT] Location is the single largest controllable factor in AI carbon footprint. A model trained in a coal-powered region can have 80x the emissions of one trained using renewable energy, making data center siting decisions climate-critical.
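The training formula and the siting comparison can be sketched in a few lines of Python. This is a minimal illustration, not a measurement tool: the cluster size and run length below are hypothetical, and only the two grid intensities come from the text.

```python
def training_emissions_kg(power_kw: float, hours: float, grid_kg_per_kwh: float) -> float:
    """E_training = P_cluster * T_training * C_grid, returned in kg of CO2."""
    energy_kwh = power_kw * hours           # kW * h = kWh
    return energy_kwh * grid_kg_per_kwh     # kWh * kg/kWh = kg


# Hypothetical workload (an assumption for illustration): 1,000 kW for 30 days.
POWER_KW = 1_000.0
HOURS = 30 * 24

# Grid carbon intensities quoted in the text (kg CO2 per kWh).
GRID_INTENSITY = {"Iceland": 0.01, "West Virginia": 0.80}

results = {
    region: training_emissions_kg(POWER_KW, HOURS, intensity)
    for region, intensity in GRID_INTENSITY.items()
}

for region, kg in results.items():
    print(f"{region}: {kg / 1000:,.1f} metric tons CO2")

ratio = results["West Virginia"] / results["Iceland"]
print(f"Same workload, different grid: {ratio:.0f}x the emissions")
```

Because power and time are identical in both runs, the ratio depends only on the two grid intensities, which is why siting dominates the result.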

Inference: The Accumulating Debt

Training happens once (or periodically). Inference happens millions of times daily. A single ChatGPT query consumes only about 0.002-0.01 kWh, which seems negligible, but at scale it compounds. OpenAI serves an estimated 100 million weekly users. If each user makes just 5 queries per week, that's 500 million queries per week, or 26 billion annually.

At 0.004 kWh per query and 0.423 kg CO₂/kWh:

$$E_{annual} = 26 \times 10^9 \times 0.004 \text{ kWh} \times 0.423 \text{ kg/kWh} \approx 4.4 \times 10^7 \text{ kg} = 44{,}000 \text{ tons CO}_2$$

That's roughly equivalent to the annual emissions of 9,600 cars (at about 4.6 tons of CO₂ per car per year) from a single AI service, and it rests on a conservative usage assumption. At 5 queries per user per day rather than per week, the total would be seven times higher.
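The same arithmetic can be sketched in Python with the units carried explicitly. The inputs are the figures quoted above; the function name and the conversion constant are illustrative choices. Multiplying queries, kWh per query, and kg CO₂/kWh yields kilograms, so the result is divided by 1,000 to express it in metric tons.

```python
KG_PER_METRIC_TON = 1_000


def annual_inference_emissions_tons(
    queries_per_year: float,
    kwh_per_query: float,
    grid_kg_per_kwh: float,
) -> float:
    """Annual inference emissions in metric tons of CO2."""
    energy_kwh = queries_per_year * kwh_per_query    # total electricity, kWh
    emissions_kg = energy_kwh * grid_kg_per_kwh      # kWh * kg/kWh = kg
    return emissions_kg / KG_PER_METRIC_TON          # kg -> metric tons


# Figures quoted in the text: 26 billion queries/year, 0.004 kWh/query,
# 0.423 kg CO2/kWh (average US grid).
tons = annual_inference_emissions_tons(26e9, 0.004, 0.423)
print(f"{tons:,.0f} metric tons CO2 per year")

# Sensitivity to the per-query energy range quoted in the text.
low = annual_inference_emissions_tons(26e9, 0.002, 0.423)
high = annual_inference_emissions_tons(26e9, 0.01, 0.423)
print(f"range: {low:,.0f} to {high:,.0f} metric tons CO2 per year")
```

The spread between the low and high per-query estimates is a factor of five, which is a reminder that the headline number is only as good as the per-query energy figure behind it.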

“The inference phase is where good intentions go to die. Everyone optimizes training because it's a one-time cost you can measure. Inference is distributed, continuous, and invisible.”

The Hidden Water Crisis

Cooling the Cognitive Engines

Carbon is only half the story. Training GPT-3 consumed an estimated 700,000 liters of clean freshwater for cooling, roughly a quarter of an Olympic swimming pool (about 2.5 million liters) for a single training run. Microsoft's 2023 environmental report revealed a 34% spike in water consumption (to nearly 1.7 billion gallons) coinciding with its AI expansion, largely attributed to data center cooling demands.

Modern AI chips (GPUs and TPUs) generate tremendous heat. A single NVIDIA H100 GPU can draw 700W at peak load. A training cluster with 10,000 such GPUs generates 7 megawatts of heat — equivalent to 7,000 space heaters running simultaneously. Two primary cooling approaches exist:

- Air cooling: Energy-intensive fans and HVAC systems, raising Power Usage Effectiveness (PUE) ratios

- Liquid cooling: More efficient but requires enormous water volumes, creating a water-energy tradeoff

The water consumption formula for evaporative cooling:

$$W_{cooling} = \frac{Q_{heat}}{h_{vap} \times \eta_{cool}}$$

Where $Q_{heat}$ is heat load (kJ), $h_{vap}$ is latent heat of vaporization (~2,260 kJ/kg), and $\eta_{cool}$ is cooling efficiency. For every 1 kWh of computation, approximately 1.8-3.8 liters of water may be consumed in evaporative cooling systems.
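As a rough check on the formula, the sketch below computes water use per kWh for a few cooling efficiencies. It assumes that all electrical energy ends up as heat, that all of that heat is rejected by evaporation, and that 1 kg of water is about 1 liter; the efficiency values are illustrative, not measured.

```python
H_VAP_KJ_PER_KG = 2260.0   # latent heat of vaporization of water
KJ_PER_KWH = 3600.0        # 1 kWh = 3,600 kJ


def cooling_water_kg(heat_kj: float, efficiency: float) -> float:
    """W_cooling = Q_heat / (h_vap * eta_cool), in kg of water evaporated."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return heat_kj / (H_VAP_KJ_PER_KG * efficiency)


# Water evaporated per 1 kWh of heat at several assumed efficiencies.
for eta in (1.0, 0.8, 0.5):
    litres = cooling_water_kg(KJ_PER_KWH, eta)  # treating 1 kg as 1 L
    print(f"eta_cool = {eta:.1f}: {litres:.2f} L per kWh")
```

Under this simplified model, an ideal system evaporates about 1.6 L per kWh, and the 1.8-3.8 L range quoted above would correspond to effective efficiencies of roughly 0.42 to 0.88.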

Regional Water Stress Amplification

Data centers don't just consume water; they consume it in locations already facing scarcity. Arizona, Texas, and Singapore — major data center hubs — rank among the most water-stressed regions globally. In 2023, a Microsoft data center in Arizona drew criticism for consuming 56 million gallons of groundwater while local aquifers depleted at alarming rates.

[!NOTE] The water-energy nexus creates compounding environmental pressures. As climate change intensifies drought conditions, the same regions attracting data centers for their cheap land and renewable energy potential often face the greatest water scarcity, turning AI growth into a direct competition with agricultural and residential water needs.

The Recommendation Algorithm Paradox

Personalization's Pollution Problem

Your Netflix recommendation engine, Spotify's Discover Weekly, Amazon's "customers who bought this" suggestions — all run on continuous inference from massive matrix factorization and neural collaborative filtering models. These systems operate 24/7, processing billions of user-item interactions.

A 2022 study estimated that YouTube's recommendation system alone consumes 600-1,000 GWh annually — more than some small countries. The perverse irony? These systems optimize for engagement, not efficiency. A/B testing infrastructure runs multiple model variants simultaneously, multiplying computational overhead.

Consider the recommendation inference cost:

$$C_{rec} = N_{users} \times N_{items} \times d_{embedding} \times F_{similarity}$$

For a platform with 500 million users and 10 million items, even sparse matrix operations require substantial computation. Every scroll through TikTok's "For You" page triggers dozens of inference calls. Multiply this across billions of daily active users, and recommendation systems become significant carbon contributors operating under the radar of environmental scrutiny.
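To get a feel for the scale, the formula can be evaluated directly. This sketch reads $F_{similarity}$ as the number of arithmetic operations per embedding dimension (2 for a dot product's multiply and add); that interpretation, the embedding size of 128, and the 1,000-item candidate set are all assumptions for illustration.

```python
def scoring_ops(n_users: int, n_items: int, d_embedding: int, ops_per_dim: int = 2) -> int:
    """C_rec = N_users * N_items * d_embedding * F_similarity (operation count)."""
    return n_users * n_items * d_embedding * ops_per_dim


N_USERS = 500_000_000   # from the text
N_ITEMS = 10_000_000    # from the text
D_EMBEDDING = 128       # assumed embedding dimension

# Scoring every item for every user once (brute force).
full_pass = scoring_ops(N_USERS, N_ITEMS, D_EMBEDDING)
print(f"full pass: {full_pass:.2e} operations")

# Scoring the whole catalogue for a single user.
per_user = scoring_ops(1, N_ITEMS, D_EMBEDDING)
print(f"one user, full catalogue: {per_user:.2e} operations")

# Scoring only a pre-filtered candidate set (assumed 1,000 items) per user.
per_user_candidates = scoring_ops(1, 1_000, D_EMBEDDING)
print(f"one user, 1,000 candidates: {per_user_candidates:.2e} operations")
```

The gap between the full-catalogue and candidate-set counts shows why production systems typically narrow the item set before scoring, and why the size of that candidate set is itself an energy decision.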

Pathways to Sustainable AI

Technical Mitigations

Several approaches show promise for reducing AI's environmental impact:

- Model Efficiency: Distillation, quantization, and sparse architectures can reduce inference costs by 10-100x. GPT-4-Turbo demonstrates that smaller, optimized models can match larger predecessors while consuming a fraction of the compute.

- Carbon-Aware Computing: Scheduling training workloads during periods of high renewable energy availability (sunny/windy periods) or in low-carbon regions can reduce emissions by 40-60%.

- Hardware Innovation: Neuromorphic chips and optical computing promise orders-of-magnitude efficiency improvements, though they remain experimental for large language models.

- Caching and Reuse: Many AI queries are repetitive. Intelligent caching systems can serve common requests without re-running inference, though this raises accuracy tradeoffs.
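Of the approaches listed above, carbon-aware scheduling is the simplest to sketch. The example below picks the contiguous time window with the lowest average grid carbon intensity for a fixed-length job. The forecast values are made up for illustration (a midday dip standing in for solar output), and `best_start_hour` is a hypothetical helper, not part of any scheduler's API.

```python
from typing import Sequence, Tuple


def best_start_hour(forecast: Sequence[int], job_hours: int) -> Tuple[int, float]:
    """Return (start_index, mean_intensity) of the lowest-carbon contiguous window."""
    if job_hours <= 0 or job_hours > len(forecast):
        raise ValueError("job_hours must be between 1 and len(forecast)")

    # Sliding-window sum: add the hour entering the window, drop the one leaving.
    window_sum = sum(forecast[:job_hours])
    best_sum, best_start = window_sum, 0
    for start in range(1, len(forecast) - job_hours + 1):
        window_sum += forecast[start + job_hours - 1] - forecast[start - 1]
        if window_sum < best_sum:
            best_sum, best_start = window_sum, start
    return best_start, best_sum / job_hours


# Hypothetical 24-hour forecast in g CO2/kWh, indexed by hour of day.
forecast = [450, 460, 470, 460, 440, 420, 380, 330, 270, 220, 180, 160,
            150, 170, 210, 270, 340, 400, 440, 460, 470, 460, 450, 450]

start, mean_intensity = best_start_hour(forecast, job_hours=4)
print(f"start at hour {start}, mean intensity {mean_intensity:.0f} g CO2/kWh")
```

A real scheduler would also have to weigh deadlines, hardware availability, and forecast uncertainty; this sketch only captures the core idea of shifting flexible work toward cleaner hours.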

The Measurement Challenge

The AI industry lacks standardized environmental reporting. Companies disclose selective metrics (PUE, renewable energy percentages) while obscuring absolute consumption. The EU AI Act's forthcoming environmental requirements may force transparency, but current methodologies remain inconsistent.

“We're asking companies to report carbon emissions for systems they don't fully understand themselves. The black box nature of deep learning extends to its environmental footprint.”

[!INSIGHT] Transparency mechanisms are emerging: ML CO2 Impact and CodeCarbon tools allow researchers to estimate emissions in real-time. However, voluntary adoption remains limited, and inference-phase tracking is virtually non-existent in production environments.

Implications: The True Cost of "Free" AI

The environmental externalities of AI represent a massive market failure. Users pay nothing per query; companies bear infrastructure costs but don't price in environmental damage; society inherits the climate consequences. This creates a tragedy-of-the-commons dynamic in which competitive pressure drives ever-larger models without regard for aggregate impact.

The parallel to the fossil fuel industry is uncomfortable but apt. Just as gasoline prices don't reflect climate costs, AI API pricing excludes environmental damage. A carbon tax on compute, theoretically elegant but politically fraught, would make that cost visible. At $50/ton CO₂ and the per-query energy range cited earlier (0.002-0.01 kWh at 0.423 kg CO₂/kWh), a ChatGPT-style query would carry roughly $0.00004-0.0002 in carbon fees: negligible per request, but millions of dollars a year across tens of billions of queries.

Regulatory momentum is building. The EU's Corporate Sustainability Reporting Directive requires detailed environmental disclosures from 2025. The SEC's climate disclosure rules, as originally proposed, would have mandated Scope 3 emissions reporting, potentially forcing tech companies to account for the carbon footprint of their AI products throughout the value chain; the final 2024 rule dropped that requirement and remains contested.

Sources: Strubell et al. (2019) "Energy and Policy Considerations for Deep Learning in NLP"; Patterson et al. (2021) "Carbon Emissions and Large Neural Network Training"; International Energy Agency (2024) "Electricity 2024"; Li et al. (2023) "Making AI Less 'Thirsty'"; Microsoft 2023 Environmental Sustainability Report; OpenAI technical reports; NVIDIA specifications; Allen Institute for AI research publications.