Social Media Is the New Propaganda Ministry

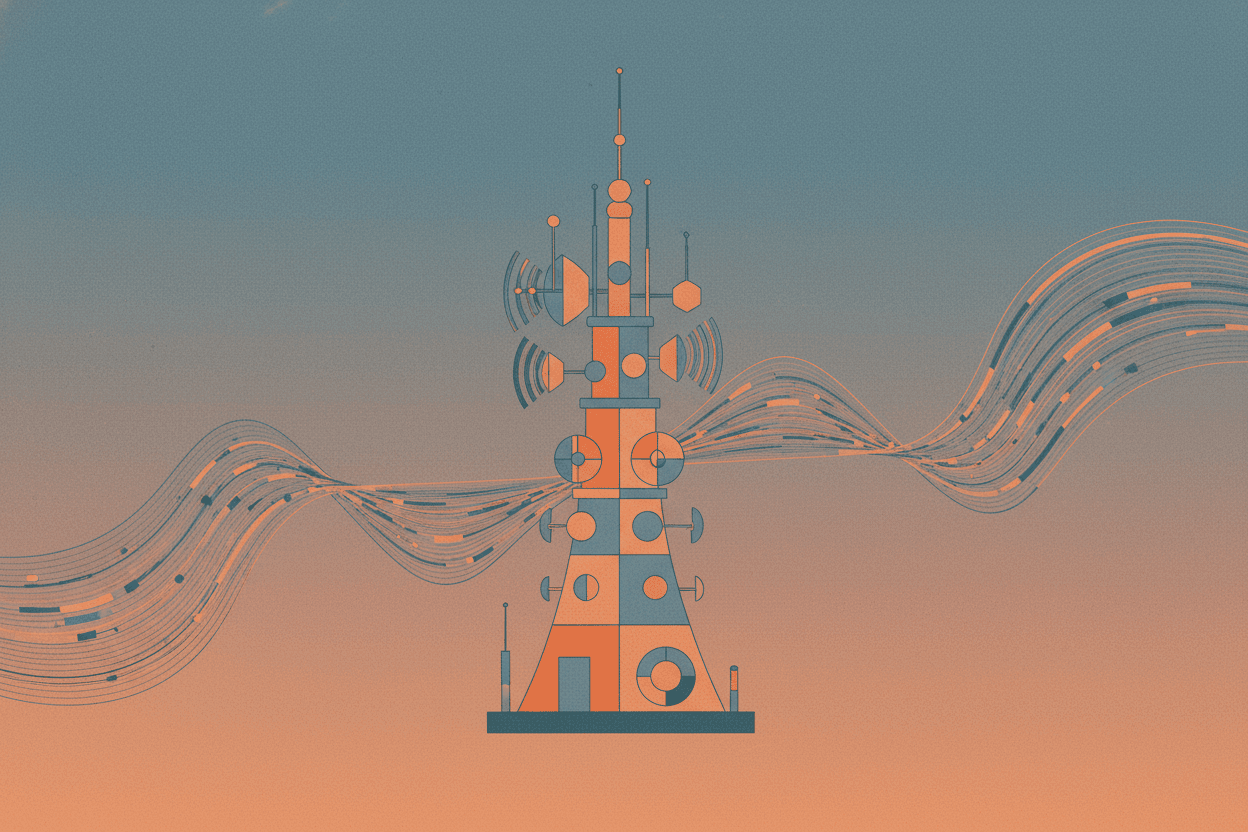

Every new communication technology triggers democratic collapse within decades. From 1930s radio to today's algorithms, the pattern is terrifyingly consistent.

Every time humanity invented a new way to communicate, a democracy collapsed within 20 years. Radio. Television. Social media. This is not coincidence—it is a pattern that historians and political scientists are only now beginning to recognize as one of the most reliable predictors of democratic breakdown in the modern era.

In 1933, just ten years after regular radio broadcasting began in Germany, the Nazi Party seized control of the state. By 1939, 70% of German households owned a radio, a penetration rate the regime deliberately subsidized; Joseph Goebbels called the medium "the most crucial instrument of mass influence." The wireless didn't cause fascism, but it provided the infrastructure that made totalitarian control possible at a scale no printing press could achieve.

The parallel today is not merely rhetorical. When Cambridge Analytica used data harvested from as many as 87 million Facebook users to target voters in 2016, it wasn't inventing a new form of manipulation. It was perfecting a technique that propaganda ministers had dreamed of for a century: the ability to deliver personalized lies to individual citizens while maintaining plausible deniability for the state.

The Goebbels Blueprint: What Radio Taught Totalitarians

Joseph Goebbels kept a diary. Among his most revealing entries are his reflections on radio's unique psychological power. Unlike newspapers, which required literacy and active engagement, radio entered the home as a companionable voice. It could bypass critical thinking by simulating intimacy.

“It would not have been possible for us to take power or to use it in the ways we have without the radio.”

The Nazi regime understood something fundamental about mass communication that democracies consistently underestimate: the medium shapes which messages are possible. Radio favored emotional appeals over rational argument. It rewarded repetition over nuance. It created what media theorist Marshall McLuhan would later call a "tribal" consciousness—a shared experience of simultaneous listening that dissolved individual critical distance.

The Reich Radio Chamber, established in 1933, controlled every aspect of broadcasting: who could speak, what could be said, and—crucially—who could listen. The infamous "People's Receiver" (Volksempfänger) was priced to be affordable for working-class families but engineered with limited range, ensuring Germans could only tune into domestic broadcasts.

[!INSIGHT] The technical constraints of broadcast technology—limited spectrum, high capital costs—meant that radio and television naturally concentrated information power in few hands. Social media inverted this model while achieving the same result: algorithmic curation now replaces state censorship, but the effect on democratic discourse is remarkably similar.

The numbers reveal the strategy's effectiveness. In 1928, before radio's mass adoption, the Nazi Party received 2.6% of the vote. By 1932, with radio penetration approaching 40%, that share had risen to 37.3%. While multiple factors drove this shift, historians now recognize that control over the dominant communication medium provided the decisive advantage.

The Algorithm as Censor: How Personalization Replaces Prohibition

When Goebbels wanted to suppress information, he had three tools: censorship, propaganda, and violence. Each carried costs. Censorship required manpower. Propaganda risked backlash if discovered. Violence generated martyrs.

Social media platforms solved this problem through a mechanism that appears benevolent: the personalized recommendation algorithm. Rather than prohibiting content, platforms simply ensure that some content reaches massive audiences while other content disappears into algorithmic obscurity.

A 2023 study by researchers at Northeastern University analyzed 1.2 billion YouTube recommendations. They found that the algorithm was significantly more likely to recommend emotionally charged, politically polarizing content than neutral or factual alternatives—not because of ideological bias, but because such content generates longer viewing sessions. The machine learned what Goebbels knew instinctively: outrage is profitable.

“The essential trick of the old propaganda was to pretend that it wasn't propaganda. The essential trick of the new propaganda is to make you think you discovered the truth yourself.”

The mechanism differs, but the effect mirrors the twentieth century's propaganda ministries. When a single company's algorithm determines what political information reaches 68% of American adults (as Facebook did in 2020), that company exercises a degree of influence over democratic discourse that no elected government has ever possessed.

[!INSIGHT] The fundamental innovation of algorithmic propaganda is deniability. When a falsehood spreads virally, platforms can claim they merely facilitated user expression. When Goebbels broadcast a lie, the regime owned it. Today's propagandists benefit from the illusion that manipulation is impossible because no single entity controls the message.

The case of Myanmar offers a grim proof of concept. Between 2017 and 2018, Facebook posts inciting violence against the Rohingya minority reached millions of Burmese users. The platform had become the primary source of news for an estimated 96% of Myanmar's internet users. When military personnel systematically spread dehumanizing content, the algorithm amplified it because it generated engagement. The result was documented ethnic cleansing.

The Twenty-Year Cycle: From Broadcast to Feed

If we map the emergence of new communication technologies against democratic breakdowns, a pattern emerges:

| Technology | Mass Adoption | Democratic Crisis | Years Elapsed |
|---|---|---|---|
| Radio | 1920s | European collapses | 10-15 years |
| Television | 1950s | Military coups (Latin America) | 15-20 years |
| Cable News | 1980s | Polarization crisis | 20-25 years |
| Social Media | 2008-2012 | Democratic backsliding (global) | 10-15 years |

This is not technological determinism. The medium does not cause democratic failure—it amplifies existing vulnerabilities. Radio didn't create German antisemitism; it allowed Nazi messaging to reach previously isolated communities. Social media didn't create political polarization; it removed the geographic and social constraints that once forced citizens to encounter opposing viewpoints.

[!NOTE] Political scientists have documented a measurable decline in democratic quality worldwide beginning around 2010, the inflection point when social media penetration exceeded 50% in developed democracies. Freedom House's 2023 annual report recorded the seventeenth consecutive year of global democratic decline.

The mechanism operates through what researchers call "context collapse." In pre-digital democracies, citizens occupied multiple, overlapping information environments: the workplace, religious communities, neighborhood associations, and local media. Each provided a partial perspective; together, they created a rough system of checks and balances. Social media flattened these into a single algorithmic feed optimized for engagement rather than accuracy.

What Goebbels Could Only Dream Of

The comparison is not metaphor. Goebbels's ministry spent millions attempting to understand individual psychology, segmenting audiences by region, class, and religion. They hired social scientists to optimize messaging. They tested slogans on focus groups before national broadcasts.

What took the Nazi propaganda apparatus thousands of employees and millions of Reichsmarks now happens automatically, invisibly, and at a scale Goebbels could not have imagined. Every social media user is micro-targeted based on thousands of behavioral signals. Every feed is personalized to maximize engagement. Every recommendation is optimized not for truth, civic health, or democratic deliberation—but for the metric that drives advertising revenue: time on platform.

“The ideal tyranny is that which is ignorantly self-administered by its victims. The most perfect slavery is that which is called freedom.”

A 2024 study by the Carnegie Endowment found that citizens in democracies with high social media penetration were 40% more likely to believe at least one conspiracy theory about their own government compared to citizens in low-penetration countries. The effect was strongest among users who reported getting most of their news from algorithmic feeds.

[!INSIGHT] The critical difference between twentieth-century propaganda and its twenty-first-century successor is agency. Goebbels had to push messages; algorithms pull users toward content that confirms their existing biases. Citizens participate in their own radicalization, believing they are conducting independent research.

The Democratic Defense?

If history offers lessons, they are not encouraging. Democracies adapted to radio and television through regulation—fairness doctrines, public broadcasting mandates, ownership limits. These measures took decades to implement, often after democratic institutions had already suffered significant damage.

Social media poses a harder problem. The platforms operate globally, beyond any single nation's regulatory reach. The algorithms are proprietary, protected as trade secrets. The business model—trading free services for attention and data—creates incentives fundamentally misaligned with democratic health.

Some democracies have begun experimenting with countermeasures. The European Union's Digital Services Act requires platforms to disclose how their algorithms work and to assess systemic risks to democratic processes. Brazil has imposed liability regimes for platforms that fail to remove election disinformation. Estonia has invested heavily in digital media literacy education.

Yet none of these approaches addresses the core problem: that democratic deliberation now occurs on infrastructure owned by a handful of private companies whose financial interests align with the emotional amplification that destabilizes democratic norms.

The pattern is clear, and the timeline is unforgiving. Every mass communication technology has eventually required democratic societies to develop new forms of regulation and new norms of civic discourse. The question is whether this adaptation can happen faster than the twenty-year cycle of democratic erosion—or whether the algorithms that now shape our political reality will complete what radio began: the transformation of citizens into audiences, and of democracy into theater.

Sources: Freedom House, "Freedom in the World 2023"; Northeastern University, "Algorithmic Recommendation Systems and Political Polarization" (2023); Carnegie Endowment for International Peace, "Digital Media and Democratic Stability" (2024); Pomerantsev, Nothing Is True and Everything Is Possible (2014); Jowett & O'Donnell, Propaganda & Persuasion (6th ed., 2019); Goebbels Diaries, 1923-1945 (translated ed., 2008)
