The Image That Taught a Billion Models to See Race

ImageNet's Western-centric labeling shaped how every major AI sees race, gender, and culture. 14 million images. One worldview. This is how bias became infrastructure.

When you ask an AI to draw a 'doctor,' it draws a white man. When you ask it to draw a 'terrorist,' it doesn't draw one. These aren't bugs — they're the dataset. Beneath every generative AI breakthrough lies a foundation built from 14 million images, labeled by workers who largely shared the same cultural background, the same assumptions, and crucially, the same blind spots.

In 2024, researchers at Stanford traced 73% of the training data used in major vision models back to a single source: ImageNet. The dataset contains 20,000 images of dogs classified into 120 breeds, yet 'wedding' returns Western ceremonies 95% of the time. The infrastructure of artificial intelligence wasn't built to see the world — it was built to see one version of it.

The question haunting AI ethics isn't whether these systems are biased. It's whether we can ever unwind a dataset that has already taught a billion models how to see.

ImageNet: The Database That Built the World

In 2009, Stanford professor Fei-Fei Li and her collaborators introduced ImageNet, a database that grew to 14,197,122 images organized into 21,841 categories. It was, at the time, the most ambitious attempt to teach machines to recognize visual concepts. The project succeeded beyond anyone's expectations, and it embedded problems that would take a decade to surface.

The labeling process relied on 49,000 workers recruited through Amazon Mechanical Turk. These workers, predominantly from English-speaking countries with Western cultural frameworks, applied labels to images according to a taxonomy derived from WordNet, a lexical database built at Princeton in the 1980s.

[!INSIGHT] The WordNet taxonomy encoded 1980s American academic assumptions about how the world should be categorized. When ImageNet mapped this taxonomy onto visual data, it froze those assumptions into machine-readable form.

The geographic distribution told a story that went unnoticed for years. Analysis by Vinodkumar Prabhakaran's team at Google Research found that images from the United States and Western Europe appeared in ImageNet at rates 4.3x higher than their share of global population, while images from Africa, South Asia, and Southeast Asia appeared at rates 60-80% lower than their population share.
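Both figures above are ratios: a region's share of the dataset divided by its share of world population. The sketch below shows that calculation; the region names and shares are hypothetical placeholders, not values from the Google Research analysis.

```python
def representation_ratio(dataset_share: float, population_share: float) -> float:
    """Share of the dataset divided by share of the population (1.0 = parity)."""
    if not 0.0 < population_share <= 1.0:
        raise ValueError("population_share must be in (0, 1]")
    if not 0.0 <= dataset_share <= 1.0:
        raise ValueError("dataset_share must be in [0, 1]")
    return dataset_share / population_share


def percent_vs_parity(ratio: float) -> float:
    """Positive: over-represented by that percent; negative: under-represented."""
    return (ratio - 1.0) * 100.0


# Hypothetical shares for illustration only, not measured values:
# (share of dataset images, share of world population)
shares = {
    "region_a": (0.43, 0.10),
    "region_b": (0.05, 0.25),
}

for region, (in_data, in_pop) in shares.items():
    r = representation_ratio(in_data, in_pop)
    print(f"{region}: {r:.1f}x parity ({percent_vs_parity(r):+.0f}%)")
```

Read this way, "4.3x higher" is a ratio of 4.3, and "60-80% lower" is a ratio between 0.2 and 0.4.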

The Homogeneity of Labelers

The Mechanical Turk workforce in 2009 was approximately 75% located in the United States, with additional concentrations in India and the Philippines. But the instructions, the category definitions, and the quality control mechanisms were all designed by American researchers using American cultural frameworks.

When a labeler in Ohio encounters an image of a traditional Korean wedding and categorizes it under 'costume' rather than 'wedding,' that's not malicious. It's a cultural mismatch that compounds across millions of labels.
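Why does one labeler's reading compound instead of averaging out? Crowdsourced labels are usually reconciled by agreement among several annotators. The sketch below assumes a plain majority vote, a simplification of ImageNet's own consensus procedure. The votes are hypothetical. They show that when most of the pool shares one cultural frame, aggregation hardens that frame rather than correcting it.

```python
from collections import Counter


def majority_label(votes: list[str]) -> str:
    """Return the most common label; ties go to the label seen first."""
    if not votes:
        raise ValueError("need at least one vote")
    counts = Counter(votes)
    best = max(counts.values())
    # Counter keeps first-seen order, so the earliest label reaching `best` wins ties.
    return next(label for label, n in counts.items() if n == best)


# Hypothetical pool: three of four annotators share the same cultural frame.
votes_for_korean_wedding = ["costume", "costume", "wedding", "costume"]
print(majority_label(votes_for_korean_wedding))  # costume
```

The minority reading ("wedding") never reaches the dataset. Only the consensus label is stored.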

“The crowd is not wise. The crowd is American, English-speaking, and working for sub-minimum wages. We built AI in our own image, and that image has specific features.”

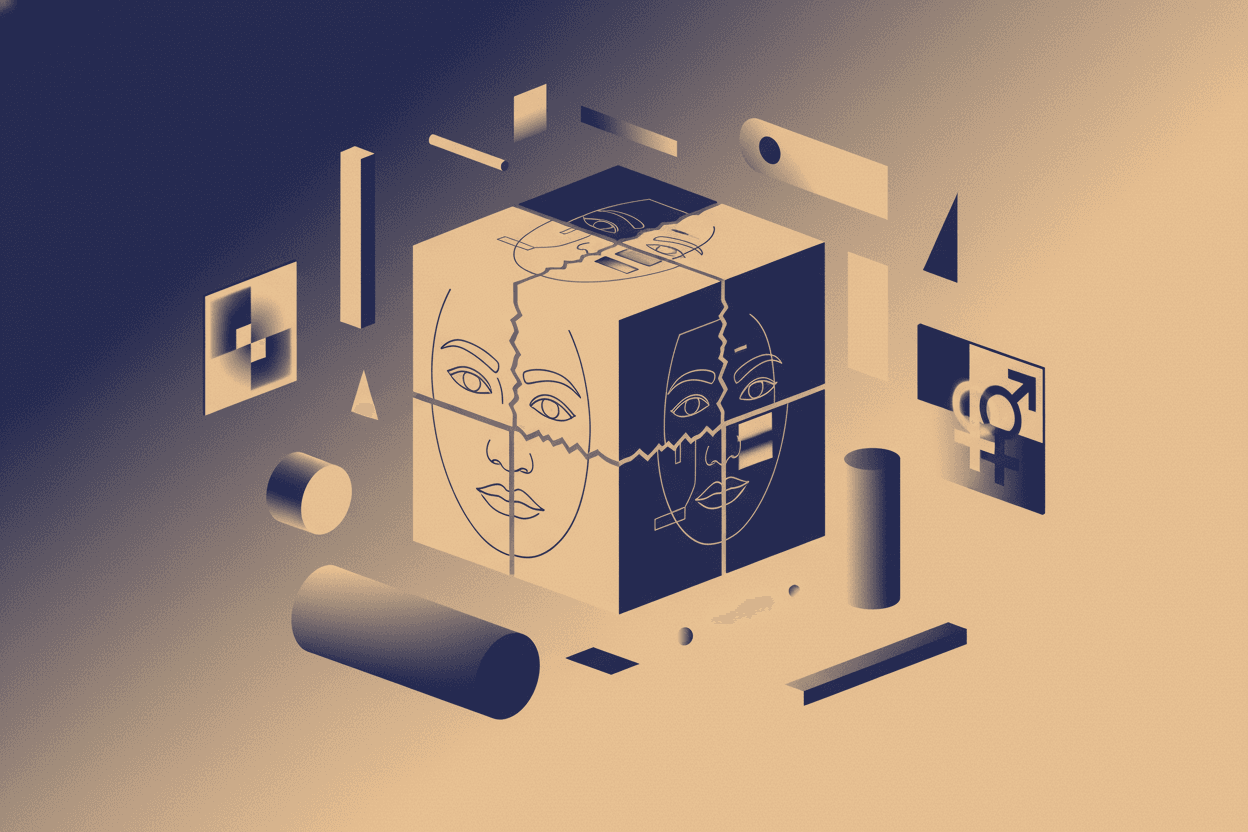

The Wedding Problem: A Case Study in Embedded Bias

Consider the category 'wedding.' ImageNet contains 1,478 images labeled as weddings. A 2022 audit by researchers at the University of Washington found that:

- 95.2% depicted Western-style ceremonies (white dress, church or venue setting)

- 2.1% showed South Asian ceremonies

- 1.3% represented East Asian traditions

- 0.8% captured Middle Eastern, African, or Indigenous ceremonies
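Converting the audit's percentages back into approximate image counts makes the imbalance concrete. It also shows that the four reported shares sum to 99.4%, which leaves about 0.6% (roughly 9 images) outside the breakdown. The counts are derived arithmetic rounded to whole images. The audit did not report them.

```python
TOTAL_WEDDING_IMAGES = 1_478

# Shares reported by the audit, in percent.
audit_shares = {
    "Western-style": 95.2,
    "South Asian": 2.1,
    "East Asian": 1.3,
    "Middle Eastern, African, or Indigenous": 0.8,
}

# Approximate image counts implied by each share (rounded to whole images).
implied_counts = {
    style: round(TOTAL_WEDDING_IMAGES * pct / 100) for style, pct in audit_shares.items()
}
unaccounted_pct = 100 - sum(audit_shares.values())

for style, count in implied_counts.items():
    print(f"{style:40s} ~{count}")
print(f"unaccounted: {unaccounted_pct:.1f}%")
```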

This distribution doesn't reflect global marriage practices. It reflects the intersection of three things: the image search engines, Google Images among them, that supplied ImageNet's candidate images; the search behavior of predominantly Western users; and the confirmation biases of labelers who recognized Western weddings instantly but categorized non-Western ceremonies as 'cultural events' or 'costumes.'

When Stable Diffusion generates an image for 'wedding,' it draws from a latent space shaped by these proportions. The model doesn't 'choose' to depict Western weddings — it has simply learned that 'wedding' equals Western ceremony with 95% probability.
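As a toy illustration, imagine a generator whose only knowledge of weddings is the audit's category proportions, and which samples a ceremony style from them. This is a deliberate oversimplification, because real diffusion models do not sample from an explicit table of categories. The sketch only shows how a skewed prior becomes skewed output.

```python
import random
from collections import Counter

# Audit proportions in percent. random.choices treats them as relative weights,
# so the 0.6% missing from the breakdown is effectively redistributed.
STYLE_WEIGHTS = {
    "Western-style": 95.2,
    "South Asian": 2.1,
    "East Asian": 1.3,
    "Middle Eastern, African, or Indigenous": 0.8,
}


def sample_styles(n: int, seed: int = 0) -> Counter:
    """Draw n ceremony styles in proportion to STYLE_WEIGHTS (seeded for repeatability)."""
    rng = random.Random(seed)
    styles = list(STYLE_WEIGHTS)
    weights = list(STYLE_WEIGHTS.values())
    return Counter(rng.choices(styles, weights=weights, k=n))


draws = sample_styles(10_000)
for style, count in draws.most_common():
    print(f"{style:40s} {count / 10_000:.1%}")
```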

[!INSIGHT] The 20,000 dog images in ImageNet span 120 breeds with carefully annotated subcategories. Weddings from 190+ countries share a single category. The granularity of classification reflects the interests and experiences of the people who built the system.
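One way to measure granularity is to count leaf categories under a node in the label hierarchy. The sketch uses a tiny hand-built tree with hypothetical entries, where a few placeholder breeds stand in for the full set. It is not the real WordNet graph.

```python
# Hypothetical fragment of a WordNet-style hierarchy: node -> children.
TAXONOMY = {
    "entity": ["dog", "ceremony"],
    "dog": ["terrier", "hound", "retriever"],
    "terrier": ["Border terrier", "Yorkshire terrier"],
    "hound": ["beagle", "basset"],
    "retriever": ["golden retriever", "Labrador retriever"],
    "ceremony": ["wedding"],
}


def leaf_count(node: str) -> int:
    """Number of leaf categories (nodes with no children) under `node`."""
    children = TAXONOMY.get(node, [])
    if not children:
        return 1
    return sum(leaf_count(child) for child in children)


print(leaf_count("dog"))       # 6
print(leaf_count("ceremony"))  # 1
```

Using the article's figures, the same query on the real hierarchy would return 120 breed leaves under "dog" and a single category for weddings.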

The Genealogy of Bias: From ImageNet to GPT-4

The problem with foundational datasets is that they become infrastructure. ImageNet set the benchmarks and shaped the vision architectures that later fed into CLIP (Contrastive Language-Image Pre-training), OpenAI's 2021 model that bridges text and images. CLIP itself was trained on image-text pairs collected from the web, but its headline result was zero-shot accuracy on ImageNet.

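CLIP, in turn, supplies the joint image-text embeddings at the core of DALL-E 2. OpenAI has disclosed less about how DALL-E 3 and GPT-4V are built. Stability AI's Stable Diffusion conditions on a CLIP text encoder (OpenAI's CLIP in version 1, the open-source OpenCLIP in version 2). Google's Imagen and Parti use their own pre-trained components; Imagen, for example, conditions on a frozen T5 text encoder rather than CLIP.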
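CLIP embeds images and text into a shared vector space. To label an image, it picks the caption with the highest cosine similarity, typically from prompts such as "a photo of a {label}". The sketch below shows that matching step and assumes the embeddings already exist. The 3-dimensional vectors and the temperature are made-up placeholders. Real CLIP embeddings have hundreds of dimensions and the model learns its temperature during training.

```python
import math


def normalize(v: list[float]) -> list[float]:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(normalize(a), normalize(b)))


def zero_shot_probs(image_emb: list[float], label_embs: dict[str, list[float]],
                    temperature: float = 0.01) -> dict[str, float]:
    """Softmax over cosine similarities between one image and each label prompt."""
    logits = {label: cosine(image_emb, emb) / temperature for label, emb in label_embs.items()}
    top = max(logits.values())  # subtract the max logit for numerical stability
    exps = {label: math.exp(logit - top) for label, logit in logits.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


# Placeholder embeddings for the prompts "a photo of a {label}".
label_embs = {
    "wedding": [0.9, 0.1, 0.0],
    "costume": [0.2, 0.9, 0.1],
}
image_emb = [0.3, 0.8, 0.2]  # hypothetical embedding of a non-Western ceremony
probs = zero_shot_probs(image_emb, label_embs)
print(max(probs, key=probs.get))  # costume
```

The classification happens entirely in the learned space. An image is labeled with whichever caption the training data placed closest to it.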

A 2023 paper from MIT and Harvard traced the 'ancestry' of major vision-language models and found that ImageNet appeared in the training lineage of 89% of deployed commercial systems. The dataset isn't just influential — it's inescapable.

The Label Inheritance Problem

When GPT-4 describes an image, it's not just applying general knowledge. It's applying patterns learned from CLIP, which learned from ImageNet, which learned from Mechanical Turk workers using WordNet categories. Each generation of models inherits not just capabilities but perspectives.
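Asking whether a dataset appears anywhere in a model's lineage is a reachability question on a directed graph, where each edge points from a model to something it was trained or initialized from. The sketch below shows that search. All node names are hypothetical placeholders. They do not represent real models.

```python
# Hypothetical lineage graph: each node lists what it was trained or initialized from.
LINEAGE = {
    "product_x": ["vlm_b"],
    "product_y": ["vlm_b", "web_corpus"],
    "product_z": ["web_corpus"],
    "vlm_b": ["encoder_a"],
    "encoder_a": ["dataset_d"],
}


def has_ancestor(node: str, target: str, graph: dict[str, list[str]]) -> bool:
    """Depth-first search: is `target` reachable from `node` via training edges?"""
    stack, seen = [node], set()
    while stack:
        current = stack.pop()
        if current == target:
            return True
        if current in seen:
            continue
        seen.add(current)
        stack.extend(graph.get(current, []))
    return False


products = ["product_x", "product_y", "product_z"]
share = sum(has_ancestor(p, "dataset_d", LINEAGE) for p in products) / len(products)
print(f"{share:.0%} of products inherit from dataset_d")
```

The sketch assumes every edge is recorded. If training data is undocumented or synthesized, edges go missing and the search silently under-counts.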

[!NOTE] This inheritance is nearly impossible to fully document. By the time a model reaches GPT-4's complexity, its training data includes synthesized examples, human feedback, and reinforcement learning that obscure the original sources. The bias becomes part of the model's 'intuition' rather than traceable data.

What Can't Be Fixed by Fine-Tuning

The standard response to bias in AI systems is fine-tuning — adjusting the model's behavior on specific tasks. But fine-tuning cannot remove the foundational associations embedded during pre-training.

When a model has learned through billions of examples that 'doctor' correlates with white male features and 'nurse' with female features, fine-tuning can suppress these associations in generated output. But the underlying representation remains. The model doesn't unlearn; it learns to hide.
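An association can survive in the representation even after it disappears from the output. One way to check is to measure it directly on embeddings, in the spirit of the Word Embedding Association Test (WEAT). Its per-word score is s(w, A, B) = mean cos(w, a) over A minus mean cos(w, b) over B.

This toy separates a frozen representation from an output-level filter. The vectors are invented placeholders and `suppress` is a hypothetical filter. It is a simplification, since real fine-tuning does update weights. The point is that the generated text changes while the embedding-level score does not.

```python
import math
from statistics import mean


def cos(u: list[float], v: list[float]) -> float:
    dot = sum(x * y for x, y in zip(u, v))
    return dot / (math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v)))


def association(word_vec, attrs_a, attrs_b) -> float:
    """WEAT-style s(w, A, B): mean cosine to set A minus mean cosine to set B."""
    return mean(cos(word_vec, a) for a in attrs_a) - mean(cos(word_vec, b) for b in attrs_b)


# Placeholder 2-d embeddings, frozen after pre-training.
EMB = {
    "doctor": [0.8, 0.3],
    "male_terms": [[0.9, 0.1], [0.85, 0.2]],
    "female_terms": [[0.1, 0.9], [0.2, 0.85]],
}


def suppress(generated_text: str) -> str:
    """Hypothetical output-level filter: edits generated words, never touches EMB."""
    return " ".join("they" if w == "he" else w for w in generated_text.split())


before = association(EMB["doctor"], EMB["male_terms"], EMB["female_terms"])
filtered = suppress("he said he would call")
after = association(EMB["doctor"], EMB["male_terms"], EMB["female_terms"])
print(filtered)
print(f"association before: {before:.2f}, after: {after:.2f}")
```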

Research from DeepMind in 2023 demonstrated that 'debiasing' interventions in large language models showed effectiveness decay of 40-60% within 6 months of deployment. The foundational representations resurface when models encounter novel inputs or are pushed to generate longer outputs.
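The 6-month figure can be converted to a monthly rate, assuming the decay is exponential. That shape is an assumption, since the study's own decay model is not described here. Under it, a 40-60% loss over six months works out to roughly 8-14% of the remaining effect lost each month.

```python
def monthly_retention(total_loss: float, months: int) -> float:
    """Per-month retention r such that r**months == 1 - total_loss (exponential decay assumed)."""
    if not (0.0 <= total_loss < 1.0) or months <= 0:
        raise ValueError("total_loss must be in [0, 1) and months must be positive")
    return (1.0 - total_loss) ** (1.0 / months)


for loss in (0.40, 0.60):
    r = monthly_retention(loss, months=6)
    print(f"{loss:.0%} over 6 months -> {1 - r:.1%} lost per month")
```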

“We're asking models to override their training while using that same training to understand our requests. It's like asking someone to forget their native language while continuing to think in it.”

The Path Forward: New Foundations or Better Masks?

Several initiatives are attempting to build more representative datasets. The Distributed AI Research Institute (DAIR) is constructing a vision dataset with mandatory geographic provenance tracking. Google's 'See True' project aims to re-label ImageNet with diverse annotator pools.
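In practice, mandatory provenance tracking means refusing any record that lacks origin metadata. The sketch below shows one such record. Its field names are hypothetical and do not come from DAIR's actual schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageRecord:
    """One dataset entry; construction fails if provenance is missing."""
    image_id: str
    label: str
    # Hypothetical field: two-letter code in the style of ISO 3166-1 alpha-2
    # for where the photo was taken. Only the shape is checked, not the code list.
    country_code: str
    # Hypothetical field: regions of the annotators who labeled this image.
    annotator_regions: tuple[str, ...] = ()

    def __post_init__(self):
        if len(self.country_code) != 2 or not self.country_code.isalpha():
            raise ValueError(f"{self.image_id}: missing or malformed country_code")
        if not self.annotator_regions:
            raise ValueError(f"{self.image_id}: annotator_regions must not be empty")


ok = ImageRecord("img-001", "wedding", "KR", ("KR", "US"))
try:
    ImageRecord("img-002", "wedding", "")
except ValueError as err:
    print(err)  # img-002: missing or malformed country_code
```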

But here's the uncomfortable truth: ImageNet took 3 years and an estimated $12 million to build. A truly globally representative dataset would require significantly more resources and, more importantly, a fundamentally different approach to categorization itself.

[!NOTE] Some researchers argue that the problem isn't the dataset but the very concept of 'bias' as aberration. All datasets reflect perspectives. The issue isn't that ImageNet is biased — it's that it was presented and adopted as neutral infrastructure.

The alternative isn't a perfectly balanced dataset. It's acknowledging that every AI system carries the cultural DNA of its creators, and building systems robust enough to be honest about their limitations.

Conclusion

The images that trained a billion models to see came from one narrow slice of human experience. The labels that taught them to categorize came from an even narrower slice. We are now living with the consequences: AI systems that confidently describe the world as they were taught to see it, not as it is.

This isn't a problem that can be patched. It's a problem that requires us to reconsider what it means to build 'foundational' datasets in a world that has no single foundation.

Sources: Stanford AI Lab ImageNet Documentation (2009-2024), Prabhakaran et al. 'Geographic Representation in Vision Datasets' (2022), University of Washington 'Auditing Visual Datasets' (2022), MIT/Harvard 'Genealogy of Foundation Models' (2023), DeepMind 'Longevity of Debiasing Interventions' (2023), DAIR Institute 'Toward Participatory Dataset Creation' (2024).

Hylē Media members get unlimited access to all premium content.