The First Deepfake Was Made in 1997, in a Research Lab

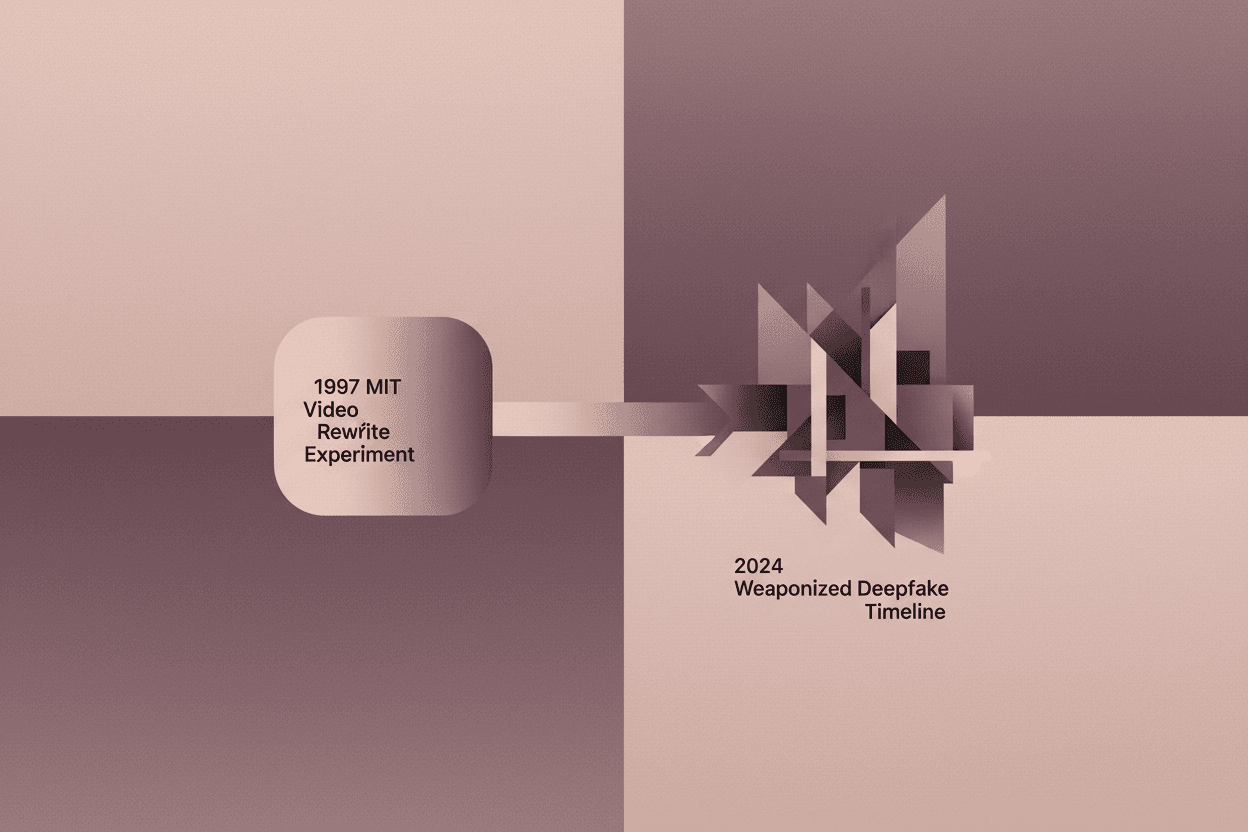

In 1997, researchers published a system that could make a person in existing video appear to say words they never spoke. Twenty-seven years later, techniques descended from that work are undermining elections worldwide.

From Academic Curiosity to Global Threat

The technology behind today's election-disrupting deepfakes grew out of that 1997 research exercise. The researchers published it openly. They had no idea what they had started.

The paper was called "Video Rewrite" and it appeared in SIGGRAPH '97 with a mundane abstract about "automatically labeling facial features." The system could take existing footage of a person and synthesize new lip movements synchronized to any audio track. Christy Turlington's face was manipulated to say words she never spoke. The researchers considered it a breakthrough in computer graphics. They released the code, documented the algorithms, and moved on to their next project.

The Architecture of Accidental Destruction

The Video Rewrite team (Christoph Bregler, Michele Covell, and Malcolm Slaney, then at Interval Research Corporation in Palo Alto) wasn't trying to build a disinformation weapon. Their goal was surprisingly innocent: automate the tedious process of dubbing foreign films. Traditional dubbing required reshoots or awkward voiceovers. Video Rewrite offered a third path: make the actor appear to speak any language naturally.

The technical approach was elegant in its simplicity. The system analyzed phonemes (the smallest units of sound in speech) and mapped them to visemes (the corresponding mouth shapes). By building a database of a person's facial movements, it could reassemble new combinations that matched any audio input.

[!INSIGHT] The core algorithm that powers modern deepfakes — mapping audio features to facial landmarks and synthesizing intermediate frames — was essentially complete in 1997. What took 27 years wasn't invention, but democratization.
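The phoneme-to-viseme idea can be sketched in a few lines of Python. This is a toy that rests on stated assumptions. The published system worked with short video segments keyed on triphones (phonemes in context) and stitched them into background footage. This sketch instead reduces each viseme to a single hypothetical mouth shape (lip opening and width) and synthesizes in-between frames by linear interpolation. The phoneme table, `VISEME_DB`, `MouthShape`, and all function names are illustrative inventions, not Video Rewrite's code.

```python
from dataclasses import dataclass

# Toy phoneme-to-viseme table using ARPAbet-style symbols.
# These groupings are an illustrative assumption, not Video Rewrite's inventory.
PHONEME_TO_VISEME = {
    "P": "closed", "B": "closed", "M": "closed",
    "F": "lip_teeth", "V": "lip_teeth",
    "AA": "open", "AE": "open", "AH": "open",
    "UW": "rounded", "OW": "rounded", "W": "rounded",
    "S": "narrow", "Z": "narrow", "T": "narrow", "D": "narrow", "N": "narrow",
    "IY": "spread", "EH": "spread",
}


@dataclass(frozen=True)
class MouthShape:
    """Hypothetical mouth landmarks: lip opening and lip width, normalized to 0..1."""
    opening: float
    width: float


# Hypothetical per-speaker database: one stored mouth shape per viseme,
# standing in for footage of the target speaker.
VISEME_DB = {
    "rest": MouthShape(0.1, 0.5),
    "closed": MouthShape(0.0, 0.5),
    "lip_teeth": MouthShape(0.1, 0.55),
    "open": MouthShape(0.9, 0.6),
    "rounded": MouthShape(0.5, 0.3),
    "narrow": MouthShape(0.2, 0.6),
    "spread": MouthShape(0.3, 0.8),
}


def phonemes_to_keyframes(phonemes):
    """Look up a stored mouth shape for each phoneme; unknown phonemes fall back to 'rest'."""
    return [VISEME_DB[PHONEME_TO_VISEME.get(p, "rest")] for p in phonemes]


def blend(a, b, steps):
    """Linearly interpolate from shape a toward shape b, producing `steps` frames (b excluded)."""
    return [
        MouthShape(a.opening + (i / steps) * (b.opening - a.opening),
                   a.width + (i / steps) * (b.width - a.width))
        for i in range(steps)
    ]


def synthesize(phonemes, frames_per_phoneme=3):
    """Turn a phoneme sequence from new audio into a sequence of mouth shapes."""
    keys = phonemes_to_keyframes(phonemes)
    if not keys:
        return []
    frames = []
    for a, b in zip(keys, keys[1:]):
        frames.extend(blend(a, b, frames_per_phoneme))
    frames.append(keys[-1])  # hold the final mouth shape
    return frames


# "Hello" in ARPAbet is roughly HH AH L OW.
frames = synthesize(["HH", "AH", "L", "OW"])
print(len(frames), frames[0], frames[-1])
```

The lookup-then-blend structure mirrors the paragraph above. Audio determines which stored shapes are used, and interpolation fills the gaps between them. Everything a real system adds, such as lighting, head pose, and texture, is left out of this sketch.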

What Changed Between Then and Now

Three factors transformed Video Rewrite from lab curiosity to global threat:

- Computational Cost: In 1997, generating one second of manipulated video required hours of processing on expensive workstations. Today, a consumer GPU can render convincing deepfakes in real time.
- Training Data: The Video Rewrite team needed carefully controlled footage of their subject. Modern neural networks can train on hours of grainy YouTube videos and produce superior results.
- Distribution: A 1997 deepfake existed on a hard drive in a research lab. A 2024 deepfake reaches 100 million viewers in seventeen minutes.
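Back-of-the-envelope arithmetic makes the first and third factors concrete. Two assumptions apply here, because the text gives no frame rate and no exact processing time. The first is a frame rate of 30 frames per second. The second reads "hours" as at least one hour of compute per second of output. Under those assumptions, real-time rendering implies a per-frame budget of about 33 ms and a speedup of at least 3600×. The distribution figure works out to roughly 98,000 views every second.

```latex
\begin{aligned}
t_{\text{frame}} &= \frac{1\ \text{s}}{30\ \text{frames}} \approx 33.3\ \text{ms per frame} \\
\text{speedup} &\geq \frac{3600\ \text{s of compute}}{1\ \text{s of video}} = 3600\times \\
\text{reach rate} &= \frac{10^{8}\ \text{views}}{17 \times 60\ \text{s}} = \frac{10^{8}}{1020}\ \text{views/s} \approx 9.8 \times 10^{4}\ \text{views/s}
\end{aligned}
```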

“We thought we were solving a problem for filmmakers. The idea that someone would use this to fake a politician's speech…”

The Intention Gap

The distance between what technologists intend and what society receives has never been wider. The Video Rewrite team followed every ethical norm of their era: they published openly, credited their sources, and demonstrated their work on consenting celebrities. They assumed that the difficulty of the technique would limit its misuse.

This assumption — that technical complexity provides natural guardrails — remains one of the most persistent fallacies in computer science. Every major platform for synthetic media today traces its lineage to academic papers that were originally published with the best intentions.

[!NOTE] A 2023 study by the Stanford Internet Observatory found that 78% of viral political deepfakes used techniques directly descended from three academic papers published between 1997 and 2014. None of the original researchers were consulted or credited.

The Election Interference Pattern

The 2023 and 2024 election cycles saw the first widespread use of deepfakes against voters:

- Slovakia (2023): A fake audio recording in which the leader of the Progressive Slovakia party appeared to discuss rigging the election spread 72 hours before voting. The clip was created using open-source voice cloning tools.
- United States (2024): AI-generated robocalls impersonating President Biden urged voters in New Hampshire to skip the primary. The fake voice came from a model trained on 30 seconds of original audio.
- Taiwan (2024): Deepfake videos appeared showing candidates conceding defeat before polls closed. The videos were debunked within hours but still reached an estimated 12 million viewers.

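Each of these attacks rested on the core idea the Video Rewrite researchers demonstrated in 1997: synthesizing utterances a real person never spoke. The Slovak and New Hampshire fakes were audio alone, produced by voice cloning. The Taiwanese videos added the audio-driven facial manipulation and phoneme-to-viseme mapping that Video Rewrite pioneered.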

The Open-Source Paradox

The academic tradition of open publication created the deepfake crisis, but closing research isn't the answer. The algorithms are already public. The expertise is distributed globally. The genie doesn't go back in the bottle.

What the Video Rewrite story actually reveals is a failure of imagination. The researchers asked "what can this do?" but never asked the more important question: "what would happen if everyone could do this?"

“The harm wasn't in publishing the research. The harm was in publishing the research without simultaneously developing the defenses against it.”

Implications for the Coming Decade

We are now in the exponential phase of synthetic media. The Video Rewrite paper described a system that took weeks to build and hours to run. Modern equivalents are free, instant, and increasingly undetectable.

The defensive technologies — watermarking, provenance tracking, detection algorithms — are running years behind the offensive capabilities. Every breakthrough in detection seems to be answered within months by more sophisticated generation techniques.
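Provenance tracking, one of the defenses named above, changes the problem from detecting fakes to authenticating originals. A capture device signs a hash of the media, and anyone holding the verification key can later check that the bytes are untouched. The sketch below assumes a hypothetical shared `DEVICE_KEY` and uses HMAC-SHA256 from Python's standard library in place of the public-key signatures that real standards use. `sign_media` and `verify_media` are illustrative names, not an existing API.

```python
import hashlib
import hmac

# Hypothetical shared secret held by a capture device. Real provenance standards
# such as C2PA sign manifests with public-key certificates instead; HMAC keeps
# this sketch within Python's standard library.
DEVICE_KEY = b"example-device-secret"


def sign_media(media: bytes, key: bytes = DEVICE_KEY) -> str:
    """Return a provenance tag bound to these exact bytes and this key."""
    digest = hashlib.sha256(media).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_media(media: bytes, tag: str, key: bytes = DEVICE_KEY) -> bool:
    """True only if the bytes are unchanged since signing and the key matches."""
    return hmac.compare_digest(sign_media(media, key), tag)


clip = b"raw camera frames..."
tag = sign_media(clip)
print(verify_media(clip, tag))            # True: unchanged clip
print(verify_media(clip + b"\x00", tag))  # False: even a one-byte edit breaks the tag
```

A tag like this proves only that a file is unchanged since signing. It does not prove that what the file shows is real. Ordinary re-encoding by a platform also breaks the tag, which helps explain why authentication has lagged behind generation.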

[!INSIGHT] The 27-year gap between Video Rewrite and widespread deepfake deployment wasn't a grace period — it was a missed opportunity. The research community had nearly three decades to develop robust authentication standards before the threat materialized. They didn't.

Sources: Bregler, C., Covell, M., & Slaney, M. (1997). Video Rewrite: Driving Visual Speech with Audio. SIGGRAPH '97; Stanford Internet Observatory (2023). Genealogy of Synthetic Media Techniques; Carnegie Endowment for International Peace (2024). Deepfakes and Election Interference: A Global Assessment.