The Algorithm That Fired 900 People Without Warning

In 2021, an AI system recommended firing 900 workers based on financial data. Managers approved it without review. Welcome to automated termination.

Nine hundred people found out they were laid off via email. The decision was made by an algorithm in seconds. The manager signed off without reading the files. This is now standard practice.

In March 2021, Katerra, a Silicon Valley construction startup once valued at $4 billion, conducted one of the most impersonal mass layoffs in corporate history. An HR platform analyzed financial metrics, identified 900 positions as "redundant," and generated termination recommendations. Senior leadership approved the batch without individual review. The affected employees received identical emails informing them their employment had ended. No conversations. No explanations. No appeals.

Here is the uncomfortable truth: this was not a malfunction. The system worked exactly as designed. And similar algorithms are now embedded in workforce management platforms at hundreds of companies worldwide.

The Architecture of Automated Termination

The Katerra case exposed a disturbing evolution in human resources technology. Modern HR platforms do not merely track payroll or manage benefits. They actively evaluate employee "value" against real-time financial metrics and can recommend termination when the numbers fall below thresholds.

According to internal documents reviewed after Katerra's bankruptcy, the company had integrated an AI-driven workforce optimization tool that continuously monitored project profitability, labor costs, and revenue per employee. When the algorithm detected a sustained negative variance, it automatically generated layoff lists grouped by department, project, and region.

[!INSIGHT] The critical innovation was not the analysis itself

The managers who signed off on the 900 terminations later testified in bankruptcy proceedings that they assumed the algorithm had identified underperforming employees. In reality, the system had flagged entire project teams based purely on project-level financial data, regardless of individual performance metrics.
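
The gap between what the managers assumed and what the system did can be shown in a few lines. The sketch below is illustrative only: the class names, project names, variance figures, performance scores, and the three-period rule are all hypothetical, and nothing here is taken from Katerra's actual platform. It shows how a flag driven purely by project-level variance never looks at individual performance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Employee:
    name: str
    project: str
    performance: float  # hypothetical individual rating, 0.0 to 1.0


@dataclass(frozen=True)
class ProjectFinancials:
    project: str
    # Hypothetical margin variance per period (actual minus budget).
    variance: tuple[float, ...]


def sustained_negative(variance: tuple[float, ...], periods: int = 3) -> bool:
    """True when the most recent `periods` values are all below zero."""
    recent = variance[-periods:]
    return len(recent) == periods and all(v < 0 for v in recent)


def flag_by_project(employees, financials, periods: int = 3):
    """Flag every employee on a project with sustained negative variance.

    Note that `Employee.performance` is never read: the decision is made
    entirely at the project level.
    """
    flagged_projects = {
        f.project for f in financials if sustained_negative(f.variance, periods)
    }
    return [e for e in employees if e.project in flagged_projects]


# Made-up example data.
employees = [
    Employee("A", "tower-east", 0.95),
    Employee("B", "tower-east", 0.40),
    Employee("C", "campus-west", 0.55),
]
financials = [
    ProjectFinancials("tower-east", (-0.02, -0.05, -0.04)),
    ProjectFinancials("campus-west", (0.01, -0.01, 0.03)),
]

flagged = flag_by_project(employees, financials)
for e in flagged:
    print(e.name, e.project, e.performance)
```

In this toy data set, the highest-rated person lands on the list alongside the lowest-rated one, simply because both sit on the same unprofitable project.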

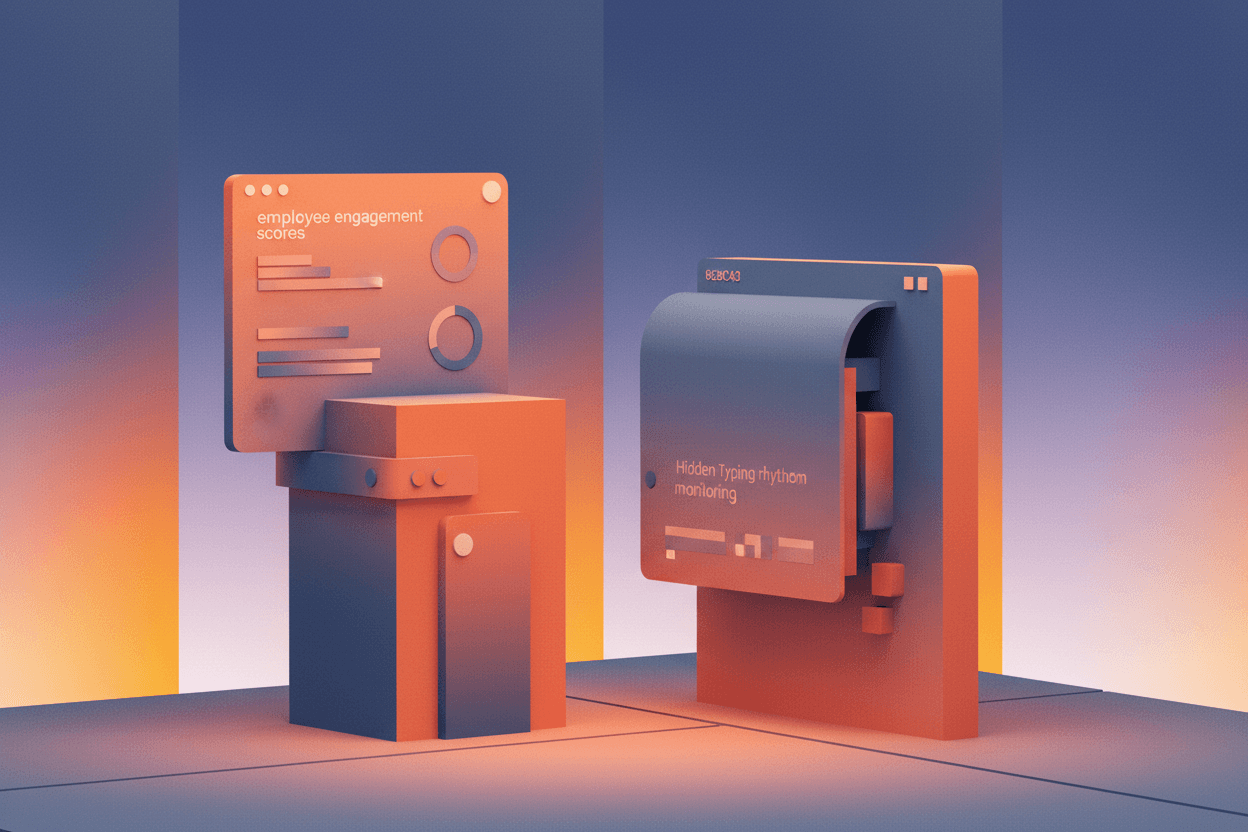

The IBM Patent That Predicted This Future

In 2018, IBM filed a patent application for an AI system that could "predict" which employees were likely to leave their jobs and recommend interventions—or terminations. The patent, numbered US10,394,858 B1, described algorithms that analyze communication patterns, calendar usage, and productivity metrics to generate "flight risk" scores.

While IBM stated the technology was designed for retention strategies, employment attorneys noted the same infrastructure could be repurposed for proactive termination recommendations. The patent explicitly mentioned using machine learning to identify "employees who may benefit from being transitioned out of the organization."

"The legal framework has not caught up. We are seeing algorithms make decisions that would require extensive documentation and justification if a human manager made them. But because the decision is framed as a 'recommendation' that a human approves, companies argue no discrimination occurred."

The IBM patent represented a watershed moment: major technology companies were publicly claiming intellectual property rights over systems that could autonomously evaluate human workers for termination.

Uber and the "Deactivation" Precedent

Before Katerra's mass layoff, Uber had already normalized algorithmic termination for gig workers. The company's driver deactivation system uses AI to evaluate driver behavior, customer complaints, and trip data. When metrics fall below thresholds, drivers receive notifications that their accounts have been "deactivated"—functionally identical to termination, but classified as a platform policy enforcement action.

Between 2019 and 2023, Uber deactivated over 1.2 million driver accounts globally. The vast majority received automated notifications with generic language about "safety concerns" or "community guideline violations." Appeals processes exist in theory, but internal data showed that fewer than 3% of deactivation decisions were reversed.

[!INSIGHT] The gig economy served as a testing ground for algorithmic termination. Traditional employees are now entering the same infrastructure. The difference is that W-2 workers have legal protections that independent contractors do not—protections that automated systems can be designed to circumvent.

The structural innovation in these systems is the illusion of human authority. An algorithm recommends termination. A human manager approves the recommendation. When questioned, the company can point to human oversight. When sued, the company can argue the algorithm was merely an advisory tool.

But in practice, the humans in these loops rarely exercise meaningful judgment. At Katerra, approving 900 terminations took less time than reading this article.

The Accountability Vacuum

The proliferation of algorithmic termination tools has created what legal scholars call an "accountability vacuum"—a zone where responsibility for employment decisions becomes intentionally diffused.

Three structural mechanisms enable this diffusion:

- The Recommendation Frame: By technically classifying AI outputs as "recommendations," companies preserve the legal fiction that humans make final decisions. Courts have historically required proof of discriminatory intent, which is difficult to establish when a human signs off on an algorithmic decision.

- The Volume Barrier: When managers approve terminations in batches of hundreds or thousands, individual review becomes structurally impossible. The system is designed to prevent the very oversight it claims to preserve.

- The Vendor Shield: Many companies use third-party HR platforms that provide termination recommendations. When errors occur, the company blames the vendor, and the vendor claims their tool is advisory only.
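
The volume barrier in particular comes down to simple arithmetic. The sketch below uses the 900-person batch from the Katerra case, but the sign-off time and the per-file review time are assumptions chosen for illustration; neither figure comes from the court record.

```python
def seconds_per_decision(batch_size: int, signoff_minutes: float) -> float:
    """Average time spent on each person when a batch is approved in one sitting."""
    return signoff_minutes * 60 / batch_size


def review_hours(batch_size: int, minutes_per_file: float) -> float:
    """Total hours one reviewer would need to read every file individually."""
    return batch_size * minutes_per_file / 60


BATCH_SIZE = 900               # batch size reported in the Katerra case
ASSUMED_SIGNOFF_MINUTES = 10   # hypothetical: time spent approving the batch
ASSUMED_MINUTES_PER_FILE = 15  # hypothetical: time to read one personnel file

pace = seconds_per_decision(BATCH_SIZE, ASSUMED_SIGNOFF_MINUTES)
workload = review_hours(BATCH_SIZE, ASSUMED_MINUTES_PER_FILE)

print(f"Batch sign-off: {pace:.2f} seconds per person")
print(f"Individual review: {workload:.0f} hours for one reviewer")
```

Under these assumed figures, a ten-minute sign-off leaves less than one second per person, while reading each file for fifteen minutes would occupy a single reviewer for 225 hours, more than five 40-hour weeks.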

[!NOTE] The European Union's AI Act, finalized in 2024, classifies AI systems used for employment decisions as "high-risk" and requires human oversight, transparency, and the right to explanation. However, enforcement mechanisms remain untested, and U.S. companies operate under no equivalent federal framework.

The economic incentives strongly favor automation. A 2023 McKinsey analysis found that companies using AI-driven workforce management tools reported 15 to 22% reductions in HR administrative costs. For large enterprises, those savings translate to millions of dollars annually, far more than the cost of settling the occasional wrongful termination lawsuit.

Where This Is Heading

The infrastructure for algorithmic termination is expanding rapidly. Major HR platforms including Workday, SAP SuccessFactors, and Oracle HCM now offer AI-powered "workforce planning" modules that include termination scenario modeling. These tools are marketed as strategic planning instruments, but the distance between modeling and execution is shrinking.

In 2024, Salesforce announced the integration of generative AI into its Work.com platform, enabling managers to "generate performance improvement plans and termination communications" automatically. The stated purpose was efficiency and consistency. The unstated implication is that the process of ending someone's employment is being reduced to a template.

"We are building systems that optimize for the metric of termination efficiency. But termination is not a logistics problem. It is a human relationship problem. When we apply supply chain thinking to human beings, we should not be surprised when the results are inhumane."

The technology industry has a consistent pattern: innovations tested on vulnerable populations eventually migrate upstream. Algorithmic termination began with gig workers, expanded to construction laborers, and is now available for corporate knowledge workers. The infrastructure exists. The legal frameworks lag behind. The economic incentives favor adoption.

Sources: Katerra Bankruptcy Court Documents, Northern District of Delaware (2021); IBM Patent US10,394,858 B1 "Predicting Flight Risk"; Uber Transparency Reports 2019-2023; McKinsey Global Institute Report on AI in HR Operations (2023); Stanford Law Review on Algorithmic Accountability (2024); EU AI Act Final Text (2024)
