The Country That Banned Algorithmic Firing

The Netherlands banned algorithmic firing. The U.S. allows at-will termination by AI. Two democracies, two futures for workers. Which will dominate the global economy?

The Netherlands told Uber its algorithm cannot fire drivers without human review. The United States said Uber can fire anyone, for any reason, at any time, including by algorithm. Both are democracies. Yet they have reached opposite conclusions about whether a machine should have the power to end someone's livelihood without a human ever reviewing the decision.

This isn't a philosophical debate anymore. In the Netherlands alone, court documents revealed that Uber's algorithmic system had deactivated over 10,000 driver accounts based on fraud detection scores — scores the drivers couldn't see, challenge, or understand. When Dutch courts demanded transparency, Uber admitted it couldn't fully explain how the system worked. The question facing policymakers worldwide is no longer whether AI will manage workers, but whether humans will have the right to intervene when it does.

In April 2023, a Dutch court delivered what privacy advocates had awaited for years: the first robust enforcement of GDPR Article 22, which grants individuals the right not to be subject to purely automated decisions with legal or similarly significant effects.

The case centered on Uber's use of an algorithmic system that flagged drivers for "fraudulent activity" — typically unusual driving patterns, GPS anomalies, or account sharing — and automatically deactivated their accounts. Drivers received notifications stating their accounts were "permanently disabled" with no meaningful explanation of what triggered the decision.

[!INSIGHT] GDPR Article 22 had existed since 2018, but the Uber Netherlands case established a critical precedent: companies cannot claim "trade secret" protections to override fundamental human rights to explanation and human review in employment decisions.

The court ruled that Uber had violated Article 22 by failing to provide:

- Meaningful information about how the algorithm processed personal data

- Human intervention in the deactivation decision

- The ability to contest the algorithmic judgment

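Uber was ordered to reinstate affected drivers with back pay. Uber's €290 million fine in the Netherlands was a separate matter: the Dutch Data Protection Authority imposed it in 2024 over transfers of European drivers' personal data to the United States. The broader impact of the court's ruling was doctrinal: the judgment established that algorithmic management systems constitute automated decision-making under European law, triggering human review requirements.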

What the Dutch Court Actually Required

The ruling didn't ban algorithmic management — it mandated human oversight. Companies can still use AI to flag potential fraud or performance issues, but a human must review the case before termination occurs. This creates what labor scholars call a "human-in-the-loop" requirement.

“The court recognized that being locked out of the app is effectively termination. If an algorithm can end your employment, you have the right to have a human explain why and consider your side of the story.”

The practical implications are significant. Uber and similar platforms must now:

- Employ human reviewers for deactivation decisions

- Provide specific reasons (not just "fraud" categories)

- Allow drivers to present counter-evidence

- Document the human review process
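In software terms, the human-in-the-loop requirement is a gate in the deactivation workflow: the model may flag an account, but only a documented human review can end it. The sketch below illustrates that gate under stated assumptions. `FraudFlag`, `HumanReview`, `decide`, and the 0.9 score threshold are hypothetical names chosen for this example, not Uber's actual system or a design prescribed by the court.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class FraudFlag:
    """Hypothetical output of an automated fraud-detection model."""
    driver_id: str
    score: float          # model score in [0, 1]; higher means more suspicious
    signals: List[str]    # concrete triggers, e.g. "GPS jump of 80 km in 1 minute"


@dataclass
class HumanReview:
    """Hypothetical record a reviewer completes; it doubles as the audit trail."""
    reviewer_id: str
    specific_reason: str            # a concrete explanation, not just "fraud"
    driver_was_heard: bool          # driver was shown the reason and invited to respond
    driver_evidence: Optional[str]  # counter-evidence the driver chose to submit, if any
    upheld: bool                    # whether the reviewer confirmed the flag
    reviewed_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def decide(flag: FraudFlag, review: Optional[HumanReview], threshold: float = 0.9) -> str:
    """Return the action for a flagged driver.

    The model can only raise a flag; deactivation requires a completed human review.
    """
    if flag.score < threshold:
        return "no_action"
    if review is None:
        # The purely automated path is closed: route the case to a human reviewer.
        return "pending_human_review"
    if not review.specific_reason.strip():
        raise ValueError("a review must state a specific reason for the decision")
    if not review.driver_was_heard:
        # The driver must get a chance to contest before any final decision.
        return "awaiting_driver_response"
    return "deactivate" if review.upheld else "reinstate"


if __name__ == "__main__":
    flag = FraudFlag("driver-42", 0.97, ["GPS jump of 80 km in 1 minute"])
    print(decide(flag, None))
```

Storing the review record, with its timestamp and stated reason, is one way to satisfy the documentation duty; a production system would also have to persist it and show the driver the specific reason.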

Brazil's Labor Courts: The App-Based Firings That Weren't

While the Netherlands was building its case against Uber, Brazilian labor courts were developing an even more aggressive doctrine. In a series of 2022-2023 decisions, Brazil's Regional Labor Courts (TRTs) ruled that app-based terminations without human review violated constitutional protections of worker dignity.

One landmark case involved an iFood delivery driver whose account was deactivated after the app's algorithm flagged him for allegedly manipulating delivery times. The driver argued he had simply been stuck in traffic. The court not only ordered his reinstatement but declared that algorithmic termination "strips workers of their constitutional right to defense and contradicts the principle of human dignity enshrined in Article 5 of the Federal Constitution."

[!INSIGHT] Brazil's approach goes further than GDPR by grounding the right to human review not just in data protection law, but in constitutional labor rights — making it harder for companies to argue technical compliance.

Brazilian courts have increasingly classified gig workers as employees rather than independent contractors, which triggers full labor law protections including:

- Mandatory human review for terminations

- Severance pay requirements

- Unemployment insurance contributions

- Collective bargaining rights

This classification matters enormously. In the U.S., Uber classifies drivers as independent contractors who have no right to due process before deactivation. In Brazil, courts have said the economic reality — drivers depend entirely on the platform for income, follow its algorithmic instructions, and cannot negotiate terms — makes them employees in practice.

The Numbers Behind the Legal Shift

Between 2020 and 2023, Brazilian labor courts received over 12,000 cases involving app-based workers contesting algorithmic deactivations. Workers won approximately 73% of cases that reached final judgment, according to research from the Federal University of Rio de Janeiro.

The EU AI Act: Codifying Employment AI as High-Risk

The enforcement actions in the Netherlands and Brazil coincided with the European Union's passage of the AI Act in 2024, which formally classifies AI systems used in employment decisions — including recruitment, firing, and task allocation — as "high-risk."

This classification carries specific obligations:

- Risk management systems must be established and documented

- Data governance requirements mean training data must be relevant, sufficiently representative, and examined for possible biases

- Technical documentation must enable third-party auditing

- Human oversight provisions require meaningful human control

- Transparency requirements mandate that workers know when AI is affecting their employment

[!NOTE] The EU AI Act's high-risk classification for employment AI applies not just to hiring systems but to any AI that "materially influences" employment decisions.

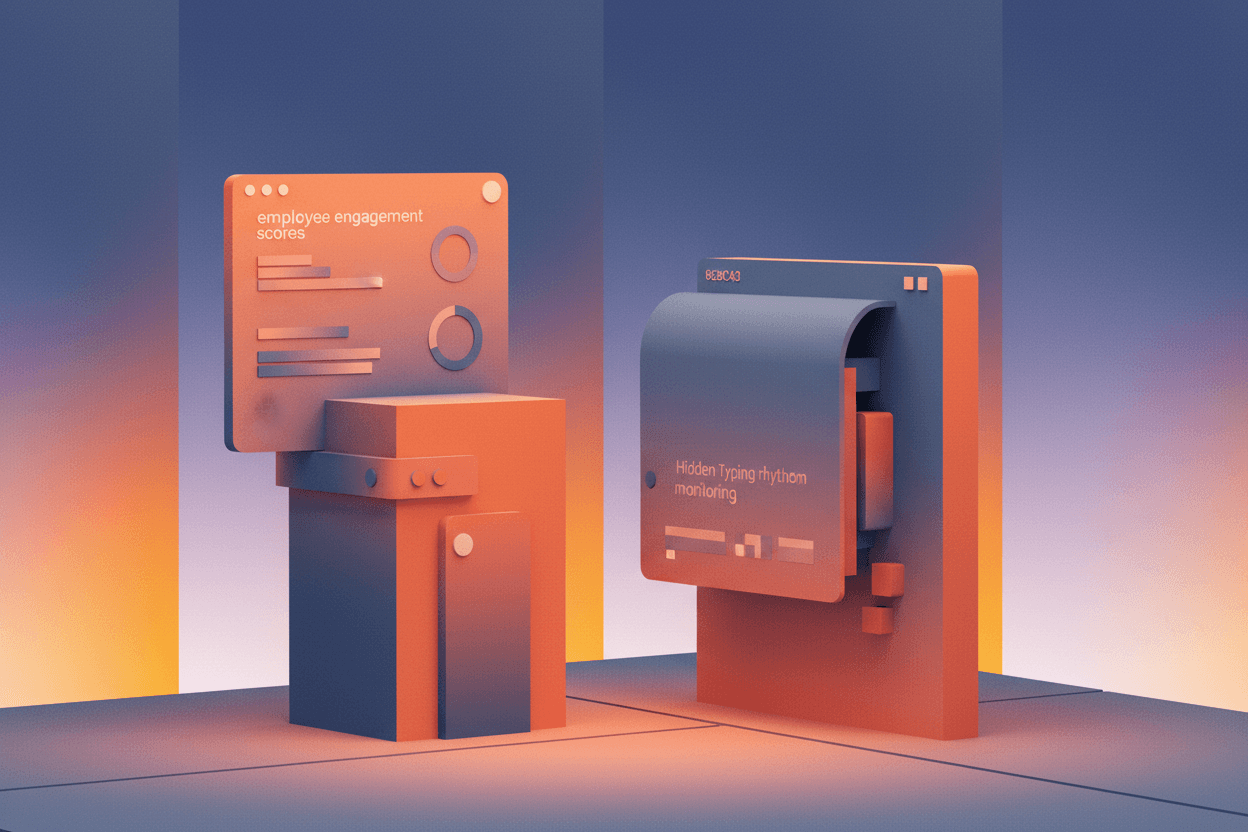

Critically, the AI Act works in tandem with GDPR. Article 22 provides individual rights (explanation, human review, contestation), while the AI Act imposes systemic requirements (risk assessments, auditing, transparency). Together, they create what scholars call "algorithmic accountability" — the principle that AI systems affecting livelihoods must be explainable, contestable, and subject to human control.

Enforcement Timeline

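The AI Act entered into force in August 2024, but most obligations for high-risk systems, including those used in employment, apply from August 2026. Violations of the high-risk requirements can draw fines of up to €15 million or 3% of global annual turnover, whichever is higher; the top tier of €35 million or 7% is reserved for prohibited AI practices. For a company like Uber, with $37 billion in 2023 revenue, 3% would come to roughly $1.1 billion.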
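The "whichever is higher" rule is simply the maximum of a fixed cap and a share of turnover. A minimal sketch, assuming the tier values set out in the Act's penalties article (Article 99 of Regulation 2024/1689): €35 million or 7% for prohibited practices, and €15 million or 3% for most other obligations, including those on high-risk systems. `max_fine` and `crossover_turnover` are hypothetical helper names. Percentages are held in integer basis points to keep the arithmetic exact, and the article's $37 billion revenue figure is treated as euros purely for illustration.

```python
# Fine ceilings under the EU AI Act's penalty tiers (Article 99 of Regulation 2024/1689).
# Amounts in euros; percentages in basis points (1 bp = 0.01%) so integer math stays exact.
TIERS = {
    "prohibited_practice": (35_000_000, 700),  # EUR 35M or 7% of worldwide annual turnover
    "other_obligation": (15_000_000, 300),     # EUR 15M or 3%, covers high-risk duties
}


def max_fine(turnover_eur: int, tier: str) -> int:
    """Upper bound on a fine: the fixed cap or the turnover share, whichever is higher."""
    fixed_cap, pct_bp = TIERS[tier]
    turnover_share = turnover_eur * pct_bp // 10_000
    return max(fixed_cap, turnover_share)


def crossover_turnover(tier: str) -> int:
    """Turnover above which the percentage cap, not the fixed cap, is the binding one."""
    fixed_cap, pct_bp = TIERS[tier]
    return fixed_cap * 10_000 // pct_bp


if __name__ == "__main__":
    turnover = 37_000_000_000  # the article's $37bn revenue, treated as euros for illustration
    for tier in TIERS:
        print(tier, max_fine(turnover, tier), crossover_turnover(tier))
```

With these inputs the sketch yields 2.59 billion under the 7% tier and 1.11 billion under the 3% tier. Both tiers cross over at a turnover of €500 million, so the fixed amounts bind only for smaller firms (and for SMEs the Act applies whichever amount is lower, which this sketch ignores).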

The American Exception: At-Will Employment Meets Algorithmic Efficiency

The United States stands alone among developed democracies in permitting what employment law scholars call "algorithmic at-will termination." The doctrine of at-will employment — which allows employers to fire workers for any reason not explicitly prohibited by statute — predates AI but has been dramatically amplified by it.

In 2023, the National Labor Relations Board (NLRB) issued a decision in Cognex Corporation that began to address algorithmic management, ruling that employers must disclose how AI systems are used in employment decisions when workers request this information during union organizing. But this is a procedural requirement, not a substantive right to human review.

“In the U.S., we've created a system where a machine can fire you faster than a human can review the decision. That's not a technology problem.”

The contrast with European and Brazilian approaches is stark:

| Right | Netherlands | Brazil | United States |
|---|---|---|---|
| Human review before termination | Required | Required | Not required |
| Explanation of algorithmic decision | Required | Required | Not required |
| Ability to contest algorithmic decision | Required | Required | Limited |
| Worker classification presumption | Employee (after ruling) | Employee | Contractor |
| Constitutional basis | Data protection | Labor dignity | None specific |

The Platform Argument

Uber and similar platforms argue that algorithmic management is essential for scale. With millions of drivers globally, they contend that human review of every deactivation would be economically unfeasible. They also argue that algorithms are more consistent than humans, reducing discriminatory firing decisions.

Research suggests the opposite is often true. A 2023 study by the Oxford Internet Institute found that algorithmic management systems can encode and amplify existing biases, particularly against non-native speakers and workers from marginalized communities who may be flagged for "communication issues" or "suspicious patterns" that reflect structural inequities rather than individual misconduct.

The Global Divergence: What It Means for Workers and Companies

The split between the EU-Brazil approach and the U.S. model creates what legal scholars call "regulatory fragmentation" — different rules in different markets. For global platforms, this means:

- Operational complexity: Maintaining different algorithmic management systems for different jurisdictions

- Competitive pressure: Companies operating in high-regulation markets may face higher costs than competitors in low-regulation markets

- Regulatory arbitrage: Platforms might shift investment and growth efforts toward markets with fewer restrictions
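The operational-complexity trade-off can be pictured as a configuration choice: look up a termination policy per market, or merge every market's rules into one strictest policy and apply it everywhere. A minimal sketch with a hypothetical `TerminationPolicy` type and hypothetical `strictest` and `policy_for` helpers; the values are transcribed from the comparison table earlier in this article, not from any platform's actual configuration.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class TerminationPolicy:
    """Hypothetical per-market rules for algorithm-triggered terminations."""
    human_review: bool   # human review required before termination
    explanation: bool    # explanation of the algorithmic decision required
    contest: str         # ability to contest: "none", "limited", or "required"


# Values transcribed from this article's Netherlands / Brazil / United States table.
POLICIES = {
    "NL": TerminationPolicy(human_review=True, explanation=True, contest="required"),
    "BR": TerminationPolicy(human_review=True, explanation=True, contest="required"),
    "US": TerminationPolicy(human_review=False, explanation=False, contest="limited"),
}

CONTEST_RANK = {"none": 0, "limited": 1, "required": 2}


def strictest(policies: Iterable[TerminationPolicy]) -> TerminationPolicy:
    """Merge policies by keeping the most protective value of each field."""
    ps = list(policies)
    if not ps:
        raise ValueError("need at least one policy to merge")
    return TerminationPolicy(
        human_review=any(p.human_review for p in ps),
        explanation=any(p.explanation for p in ps),
        contest=max((p.contest for p in ps), key=CONTEST_RANK.__getitem__),
    )


def policy_for(market: str, harmonize: bool) -> TerminationPolicy:
    """Parallel systems (harmonize=False) vs. one global standard (harmonize=True)."""
    return strictest(POLICIES.values()) if harmonize else POLICIES[market]
```

With `harmonize=True`, a U.S. driver gets the Dutch and Brazilian protections; with `harmonize=False`, the platform must maintain and test a separate rule set for every market, which is the complexity the list above describes.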

But there's also evidence that regulatory pressure drives innovation. After the Dutch ruling, Uber invested in developing explainability tools for its fraud detection system — technology that could ultimately benefit all users, not just those in jurisdictions that mandate it.

[!INSIGHT] The regulatory divergence may not be permanent. California's AB 701 (2021) requires large warehouse employers to disclose the productivity quotas used to discipline workers and bars quotas that prevent workers from taking meal and rest breaks, suggesting even U.S. jurisdictions are moving toward European-style limits on algorithmic management.

The most significant long-term question is whether market forces or regulatory harmonization will determine the global standard. If major markets like the EU, Brazil, and potentially China mandate human oversight, platforms may adopt those standards globally rather than maintain parallel systems. Alternatively, a fragmented regulatory landscape could persist, creating a "race to the bottom" as platforms concentrate growth in jurisdictions with the weakest worker protections.

Sources: Court of Amsterdam, Uber Netherlands BV v. Workers (2023); Brazilian Labor Court System, iFood Driver Cases (2022-2023); EU AI Act, Regulation 2024/1689; Oxford Internet Institute, "Algorithmic Management and Worker Rights" (2023); National Labor Relations Board, Cognex Corporation Decision (2023); University of Amsterdam Institute for Information Law, GDPR Article 22 Enforcement Analysis (2024).
